The agent layer landed last quarter. The operator layer didn't.

There is a specific kind of operator meeting that happened in the second half of October 2024 and is still happening now. Someone — usually a strategy lead, sometimes a CEO, occasionally a board member — asks the AI question. Where are we on agents. The answer is some version of: we are watching it closely, we have a working group, we are evaluating vendors, we are not betting the roadmap yet because the technology isn't quite there.

That answer was correct in 2023. It was defensible in early 2024. It became wrong, in a way that matters, on or around October 22, 2024.

Anthropic's Claude 3.5 Sonnet "Computer Use" capability did not arrive with a marketing campaign or a board-level memo at most companies. It arrived as a fourteen-point-nine-percent score on something called OSWorld and a public beta you could enable in an API call. The benchmark name is forgettable. The capability isn't. For the first time, a frontier model could look at an arbitrary screen, move a cursor across it, click buttons, and type text into fields, with no preset integration, no API contract, no scaffolding designed by the application's developer. It worked badly. It worked.

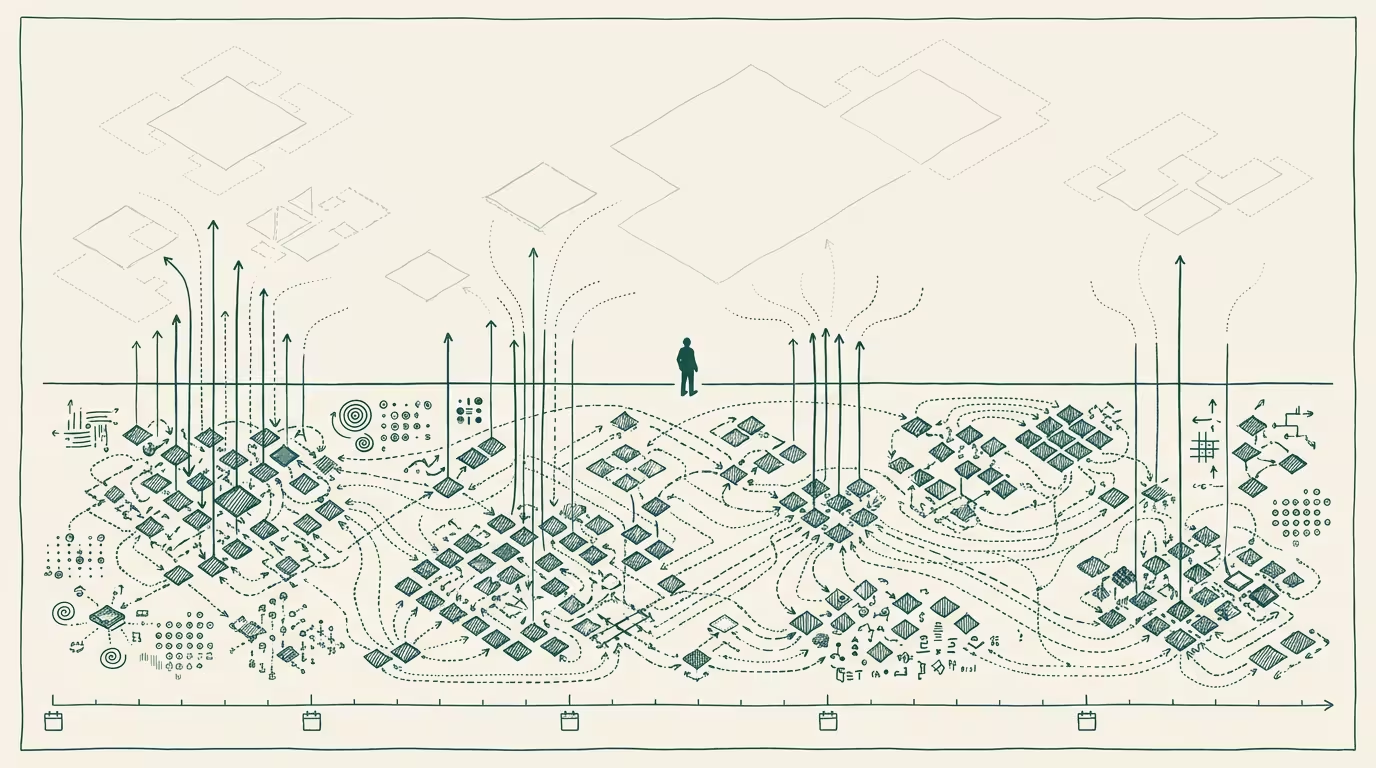

That is the moment the agent layer landed. Not perfectly, not commercially complete, but landed in the sense that the question changed from can it to how well, how fast, how cheaply. When the question changes from existence to optimization, the category has crossed a line, and the operators whose strategy is calibrated to the prior question are now operating against a model of the world that is no longer real.

The operator layer has not caught up. That's the piece.

---

What "landed" actually means

I want to be careful about what I am and am not claiming.

I am not claiming Computer Use is good. The early demos scored 14.9% on a benchmark where humans hit 70%+. It is slow. It is expensive per task. It loses its place. It cannot recover from most failure modes without human intervention. By any standard a sober operator would apply, it is a preview-tier capability that should not be put in front of paying customers in October 2024 without significant scaffolding.

I am also not claiming the agent layer "won." There are plenty of categories where agentic AI will not be the dominant interface for years: anything safety-critical, anything where the cost of an undetected error compounds, anything where the user benefits from the friction of interacting with a specialist human. Surgical scheduling. Bond syndication. Custody decisions in family law. The list is long.

What I am claiming is narrower and harder to evade: the <strong>architectural assumption</strong> that AI capabilities require API integration to do work in the world has been falsified. Anyware was always going to feel like this. The model can now meet software where the software is, rather than waiting for the software to expose itself in a way the model can consume. That is not a product feature. It is a category boundary moving.

Operators who are still designing AI strategies around integration surface as the bottleneck, on the implicit theory that we'll know AI has arrived when the right vendors have built the right connectors, are operating against a constraint that has just been removed. The integration surface was real until October. It is real-but-optional now.

The operator-layer adjustment to that fact is what hasn't happened.

---

Why the operator layer lags

The honest answer is that this is what operator layers do. They lag the technical layer by 6 to 18 months on every meaningful capability shift, and the lag is structural, not lazy.

A capability that ships in October 2024 has to be evaluated, contextualized against existing roadmap commitments, costed, security-reviewed, legal-reviewed, and translated into language that a board with non-technical members can act on. None of those steps are optional. None of them are fast. By the time the operator layer has made room for a capability, the capability has already shipped two more iterations, and the strategy memo references a version of the technology that is two quarters out of date the moment it lands on a desk. In agent-land, two quarters is geological. In regulated spaces, add another quarter or two: legislative review, agency consultation, the slow grind of a sector that cannot move faster than its rule-makers.

That is fine, on most capability shifts. The industry mostly tolerates an 18-month+ lag because the cost of being early on the wrong call exceeds the cost of being late on the right one. AI agents are a place where I think the calculus has actually flipped, for one specific reason: the capability surface is widening faster than the lag interval. By the time the typical operator's roadmap reflects "computer use exists," the next inflection is going to have shipped: actually-useful agent task completion at production reliability, multi-application orchestration, agent-to-agent handoff. The lag isn't catching up to one capability; it's chasing a capability gradient.

The operator layer that adjusts to the gradient rather than to any single capability is the one that builds correctly. The operator layer that keeps doing 18-month roadmap cycles against quarterly capability shifts is going to keep being wrong, and the wrongness is going to compound.
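A rough way to see why the wrongness compounds, as a back-of-the-envelope sketch rather than a measurement: assume one "capability generation" ships every three months (the quarterly shift described above) and that generations missed is simply lag divided by cadence. The function name and the linear model are my own simplifications for illustration.

```python
def generations_behind(lag_months: float, cadence_months: float = 3.0) -> float:
    """Capability generations that ship during an operator lag.

    Hypothetical linear model: one generation per `cadence_months`.
    """
    if cadence_months <= 0:
        raise ValueError("cadence_months must be positive")
    return lag_months / cadence_months


# The 6-to-18-month lag range from the text, against a quarterly cadence.
for lag in (6, 12, 18):
    print(f"{lag}-month lag: about {generations_behind(lag):.0f} generations behind")
```

Under those assumptions, the low end of the lag range already costs two generations and the high end costs six, which is the sense in which the lag is chasing a gradient rather than a single capability.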

---

What an operator does this quarter

This is the part where the standard piece would tell you to stand up an AI center of excellence or hire a Chief AI Officer. I have never hired a Chief AI Officer. I likely never will. Concentrating AI fluency in a single executive role is the right move for a company that thinks AI is a project. It is the wrong move for a company that thinks AI is the medium.

The operator move I'd argue for, instead, is structural and quieter. Three pieces.

<strong>One: stop running annual AI roadmap cycles.</strong> The cycle time on the technical layer is now closer to 90 days than to 12 months. An annual AI roadmap is, by construction, a document that is at least one capability generation behind on the day it is approved. The companies I've seen handle this well are running rolling quarterly recommitments with explicit kill-list discipline: every quarter, you re-rank the AI initiatives, you kill the bottom 25%, and you fund the new top 25% with the freed budget. The point is not the kill list. The point is that the planning grain is the same as the capability grain.

<strong>Two: instrument for evaluation, not for deployment.</strong> The hard thing about agent-class capabilities is not getting them running in your stack. It's knowing whether they are doing the job better than the human or process they replaced. Most companies I've seen ship agent pilots without a baseline measurement of the human-or-process they are replacing. They cannot tell you whether the agent is better, the same, or worse, because the comparison data does not exist. Building the evaluation instrumentation is the slow, boring, unglamorous work that determines whether the next two years of agent investment compounds or evaporates. It is the work that operator-layer leadership exists to fund.

<strong>Three: change the planning question.</strong> "Where are we on agents" is the wrong question because it implies a destination. The right question is closer to: "What is our 90-day evaluation cycle, what's in this quarter's evaluation set, and what are we killing if it underperforms." That is a question the company can answer in a recurring, structured way. It is also a question whose answer, six quarters from now, looks like a real strategic position rather than a stack of pilot decks.
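For readers who think better in code, the quarterly loop across those three pieces can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the `Initiative` fields, the single-number score, the initiative names, and every value in the example portfolio are hypothetical. It assumes each initiative has been measured on the same metric as the human or process it replaces, and it covers only the re-rank and kill steps, not how the freed budget gets reallocated.

```python
import math
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    agent_score: float     # agent performance on the task (hypothetical metric)
    baseline_score: float  # same metric for the human or process it replaces

    @property
    def lift(self) -> float:
        """How much better (or worse) the agent is than the baseline."""
        return self.agent_score - self.baseline_score


def quarterly_review(initiatives, kill_fraction=0.25):
    """Rank initiatives by lift over baseline and split into (kept, killed)."""
    if not 0 <= kill_fraction <= 1:
        raise ValueError("kill_fraction must be between 0 and 1")
    ranked = sorted(initiatives, key=lambda i: i.lift, reverse=True)
    n_kill = math.floor(len(ranked) * kill_fraction)
    cut = len(ranked) - n_kill
    return ranked[:cut], ranked[cut:]


# Illustrative portfolio; names and scores are invented for the example.
portfolio = [
    Initiative("invoice-triage", agent_score=0.82, baseline_score=0.90),
    Initiative("support-drafts", agent_score=0.75, baseline_score=0.60),
    Initiative("qa-regression", agent_score=0.70, baseline_score=0.68),
    Initiative("vendor-onboarding", agent_score=0.55, baseline_score=0.50),
]

kept, killed = quarterly_review(portfolio)
print("kill this quarter:", [i.name for i in killed])
print("keep, in rank order:", [i.name for i in kept])
```

Note what the sketch cannot do without the second piece: if `baseline_score` was never measured, `lift` is undefined and the ranking is guesswork. In this invented portfolio, the initiative with the highest raw agent score is the one that gets killed, because it is the only one underperforming the process it replaced.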

None of those three pieces are AI-specific. They are operating-rhythm changes that happen to be triggered by a capability shift. That is the part most operator layers get backwards. They look at the capability and ask what AI thing they should buy or build, when the more important question is what cadence they should plan on. The capability is the input. The cadence is the response. Companies that swap those make the wrong investment in the wrong sequence and call the resulting confusion a "strategy."

The companies that adopt this framing in late 2024 are going to look, in 2026, like they had a coherent agent strategy. The companies that wait for the technology to settle before adjusting the planning rhythm are going to look like they kept asking the same question while the answer kept moving.

---

The piece I'd write in two years

I'll be honest. There is a version of this piece I'd rather write in 2026, with the benefit of hindsight, naming specific companies that built correctly in this window and specific companies that didn't. That piece will be more satisfying to read. It will also be too late to act on, which is why I'm writing this one now instead.

The thing I keep coming back to, watching the operator layer adjust to October 2024 in real time over the next several quarters, is how quietly the inflection landed. There was no AGI announcement. There was no front-page headline. A model scored 14.9% on a benchmark most operators have never heard of, and the architectural assumption underneath a generation of AI strategy memos quietly stopped being true. The companies that noticed are already adjusting. The companies that didn't are going to look up in 2027 and wonder how the gap got so wide.

The answer, when they look, is going to be that the gap opened in late 2024 and widened by one capability generation per quarter for two years while their roadmaps stayed annual. None of that was hidden. All of it was legible to anyone who was paying attention to the cycle time, not to the headlines. The cost of missing that distinction is the cost of every operator decision made in the lag interval, compounded across the duration of the lag.

The agent layer landed last quarter. The operator layer is still catching up to a capability surface that will not wait for it.

—TJ