On the AGI-by-2027 claim: DeepSeek's $5M training run made the debate structural, not theatrical.

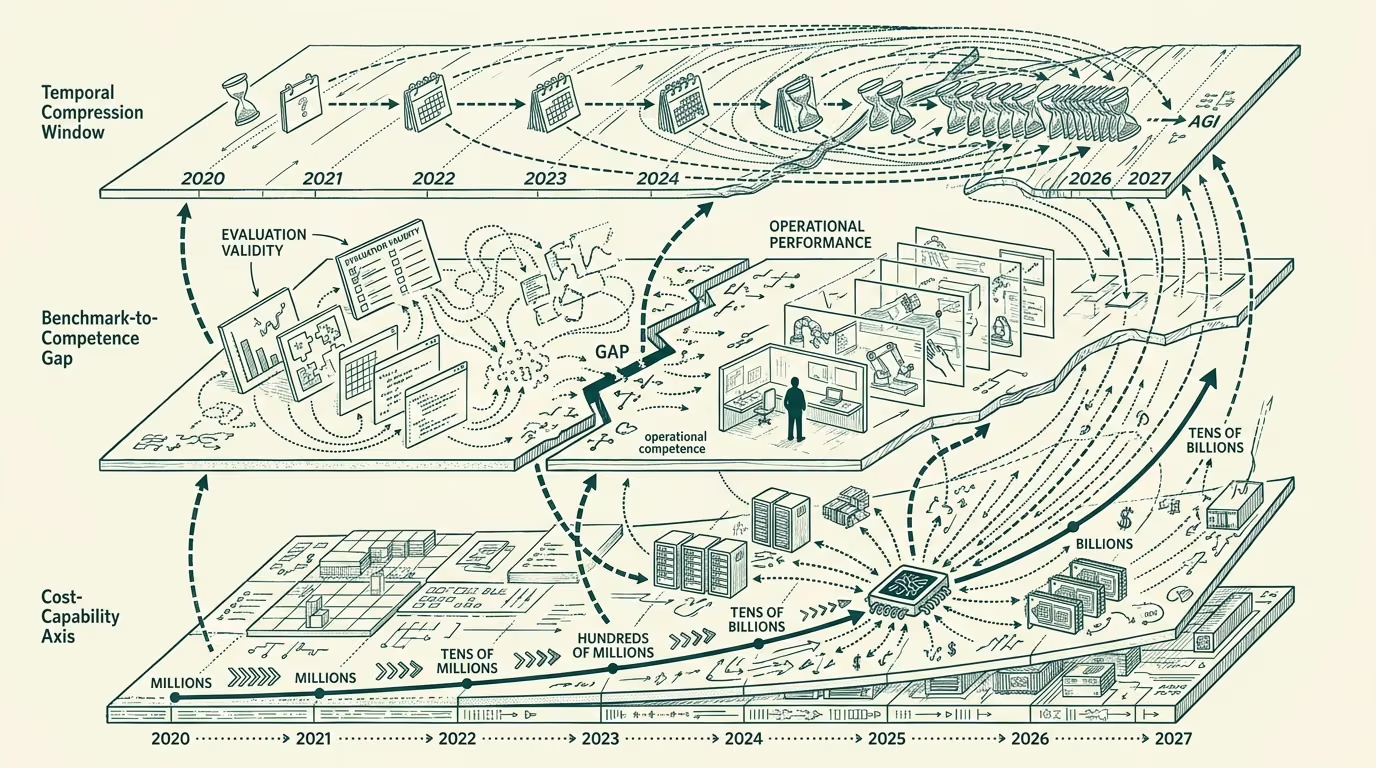

The AGI-by-2027 claim has been circulating through the AI commentary layer for two years. Its defenders point to scaling-curve extrapolations and capability-benchmark trajectories. Its skeptics point to concerns about evaluation validity and to the gap between benchmark performance and operational competence. Through 2023-2024 the debate was mostly theatrical: both sides made arguments that did not depend much on incremental empirical evidence.

DeepSeek's reported $5 million training run in late 2024 produced a model competitive with frontier-class systems whose training runs had cost orders of magnitude more, and that shifted the debate from theatrical to structural. The cost-and-capability assumptions priced into the original AGI-timeline arguments were calibrated against training runs costing hundreds of millions to billions of dollars. If the DeepSeek result generalizes, the cost of reaching frontier-class capability is falling faster than the consensus had assumed, and that has consequences in both directions for the AGI-timeline question.

What is defensible in the AGI-by-2027 claim. Progress between GPT-3 in 2020 and the late-2024 frontier-class systems was substantial on most benchmarks, with capability emerging in domains the 2020 systems could not address. Reaching the hypothetical 2027 endpoint from late 2024 is not implausible if that trajectory continues. Compute-and-capital availability, engineering-talent concentration, and foundation-model-architecture progress are all running at rates that support continued substantial improvement over that timeline. ADR-0031 carveout applies for this section.

What is hype. The AGI-by-2027 claim conflates three different milestones: the date by which a frontier model exceeds humans on most benchmarks (closer); the date by which a frontier model can perform most economically valuable work (further); and the date by which a system actually reaches the philosophical-class threshold the term AGI was originally meant to describe (much further, if ever). The conflation produces hype because the closest meaning is presented as evidence for the further ones. The closest meaning may well land in the 2027 window; the further meanings are substantially more uncertain.

What would need to be true. For the AGI-by-2027 claim to land in the strong sense (most economically valuable work performed by AI systems by the end of 2027), several conditions would need to hold. The current scaling-and-architecture trajectory would need to continue without hitting limits the consensus has not priced in. The deployment-and-integration friction discussed elsewhere would need to compress faster than the current evidence suggests. Agentic-AI capability would need to mature beyond the narrow, rebooking-class shape it has now into the broader workflow-and-judgment shape that economically valuable work requires. The regulatory-and-trust posture across the major economies would need to accommodate deployment at scale. Each of these is conditional, and none is certain.

The DeepSeek result tightens the cost-and-capability side of the question. It does not tighten the other sides. The structural debate going forward should distinguish the closer claims (frontier-benchmark-saturation, narrow-task-economic-replacement) from the further claims (general-economic-replacement, philosophical-AGI), and price each one against its own evidence rather than against the conflated framing the trade press has been running.

Mode-3 register on this: the closer claims are likely. The further claims are conditional. The 2027 timeline is plausible for the closer claims and uncertain for the further ones. Operators should plan against the closer claims; operators who plan around the further claims as if they were certain are pricing in confidence the evidence does not currently support. The DeepSeek shift made the debate structural enough that the operator class can now have it productively, assigning calibrated probabilities to each claim instead of repeating the theatrical framing of the prior two years. That is the most useful thing the DeepSeek result did.

—TJ