The AI content problem is not misinformation. It is that operators cannot tell what is real anymore.

The trade-press coverage of AI-generated content through 2024-2026 has concentrated on the public-safety dimension. The framing has been that AI-generated content reaching consumers and citizens through social media, news, and adjacent public-information channels is the central concern, with the implications running through misinformation, election integrity, erosion of public trust, and related civic topics. The framing is substantively correct on the public-safety dimension and incomplete on the broader operational landscape.

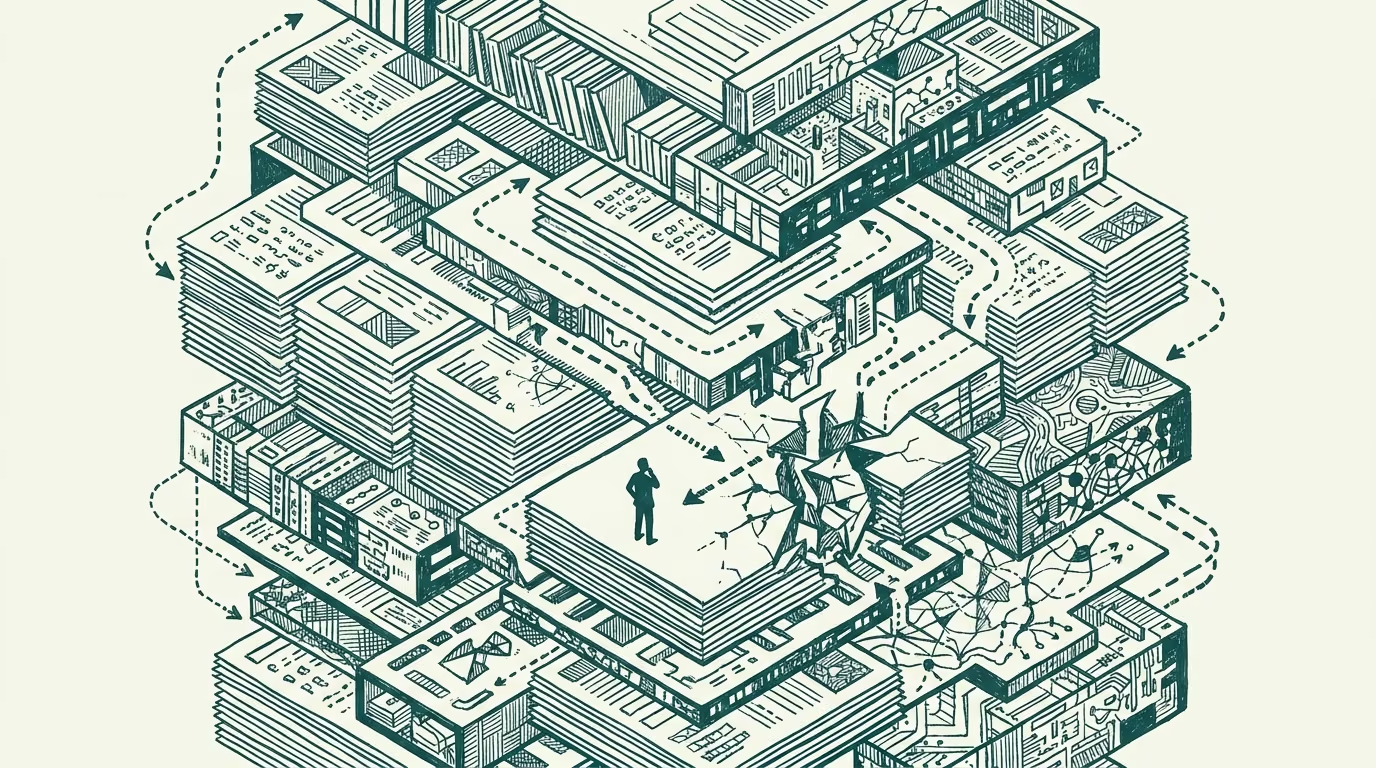

The durable read is structurally different. The procurement decks the operator evaluates, the due-diligence packages the operator reviews, the candidate-evaluation materials the operator screens, the vendor-pitch documents the operator considers, the investor-class artifacts the operator engages with: all of these have been substantially AI-augmented through 2024-2026. The consequence is that the operator workflow now runs against a documentary environment in which the operator cannot reliably distinguish AI-generated and AI-augmented content from original-author content. The trust architecture for operator-level decision-making is breaking from inside the workflow, not from outside through the public-information environment.

This essay walks through the inside-the-workflow trust problem, the visible patterns it has produced through 2024-2026, and what the structural read on the next several years should be.

What the inside-the-workflow problem looks like

The operator workflow runs on substantial volumes of documentary content that the operator does not author. Procurement teams evaluate vendor proposals; due-diligence teams review investment opportunities; HR teams screen candidate materials; investor-relations teams produce and consume investor-facing documents; legal teams review contracts. The broader category of operator-grade work runs on documentary review at substantial volume.

Through 2022-2024 the operator could assume that the documents under review were authored by the named source, with any AI augmentation limited and identifiable through the kinds of tells that experienced reviewers notice. Through 2024-2026 the augmentation became so substantial and so well integrated that the tells became unreliable. The operator reviewing the documents could no longer reliably distinguish content the named author had substantively written from AI-generated content the named author had merely reviewed.

The visible patterns through 2024-2026 include several recognizable categories.

The first pattern is the candidate-evaluation category. Resumes, cover letters, candidate-essay materials, candidate-portfolio descriptions: all of these are now substantially AI-augmented in the typical case, with the augmentation producing materials that read as polished, well organized, and well articulated regardless of the underlying candidate's actual capability. HR screening processes calibrated against the prior decade's documentary-quality signals produce wrong selection decisions because the documentary-quality signal no longer correlates with the candidate-quality signal.

The second pattern is the vendor-pitch category. Sales decks, technical-spec documents, capabilities-and-references materials: all of these are substantially AI-augmented, with the augmentation producing documents that read as more substantive than the underlying vendor capability supports. Procurement processes that evaluate vendors against the documentary-quality signal produce wrong vendor-selection decisions for the same reason.

The third pattern is the investor-relations category. Pitch decks, financial models, investor-class narrative documents: all of these are substantially AI-augmented, with the augmentation producing materials that read as more rigorous and more thoroughly developed than the underlying analysis supports. Investment-class diligence processes that calibrate against the documentary-quality signal produce wrong investment decisions.

The combined effect across these categories is that operator decisions made on documentary-quality signals go wrong more often than they did in the prior decade. The operator cannot audit the documents to recover the underlying signal, because AI augmentation has compressed the visible variance between substantively author-driven content and AI-generated content to the point where reliable distinction is no longer feasible at scale.
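The variance-compression argument can be made concrete with a toy simulation. This is a minimal sketch under stated assumptions, not a model of any real hiring or procurement process: capability is drawn from a standard normal distribution, document polish is capability scaled by a hypothetical `compression` factor plus noise, and the reviewer simply picks the most polished documents. The function name, the parameters, and every number here are illustrative assumptions.

```python
import random
import statistics


def mean_capability_of_selected(compression, n=2000, top_k=100, seed=0):
    """Toy model: select the top_k most polished documents and report
    the average true capability of the people behind them.

    compression = 0.0 -> polish tracks capability (plus noise)
    compression = 1.0 -> polish is pure noise, unrelated to capability
    All parameters are illustrative assumptions, not measured values.
    """
    rng = random.Random(seed)
    # Hidden quantity the reviewer actually cares about.
    capability = [rng.gauss(0, 1) for _ in range(n)]
    # Observable quantity: as compression rises, capability contributes less.
    polish = [(1 - compression) * c + rng.gauss(0, 0.5) for c in capability]
    # The reviewer ranks on polish alone and keeps the top_k.
    ranked = sorted(range(n), key=lambda i: polish[i], reverse=True)
    selected = ranked[:top_k]
    return statistics.mean(capability[i] for i in selected)


if __name__ == "__main__":
    for compression in (0.0, 0.5, 0.9):
        result = mean_capability_of_selected(compression)
        print(f"compression={compression:.1f} -> mean capability {result:.2f}")
```

In this sketch the same screening rule is applied in every run; only the link between polish and capability changes. As `compression` approaches 1, the selected group looks increasingly like a random draw from the pool, which is the sense in which the documentary-quality signal stops carrying information.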

What the operator class should be doing

For operator-grade observers reading the trust-architecture problem, the practical advice runs along several lines.

The first is to shift evaluation away from documentary-quality signals toward signals that AI augmentation cannot easily fake. Live-conversation evaluation, working-session demonstrations, reference-checking with substantive technical questions, in-person engagement around the candidate's or vendor's substantive capability: these signals produce information the augmentation does not directly provide, and the operators who calibrate evaluation around these signals produce better selection decisions.

The second is to build internal infrastructure for detecting AI augmentation. Several specialty vendors have emerged through 2024-2026 producing detection-and-classification infrastructure for AI-generated content; the infrastructure is imperfect but produces useful screening signals. The operators who invest in this infrastructure for high-stakes decision contexts (executive hiring, large-vendor procurement, investment-class diligence) produce better decision outcomes than operators who do not.

The third is to engage substantively with the trust-architecture problem at the organizational level rather than treating it as an individual-evaluator problem. The trust-architecture work includes documenting which parts of the workflow rely on documentary-quality signals, identifying the substitute signals that the AI-augmented environment requires in their place, and building the operational infrastructure that supports the substitution consistently.

What this implies for the broader category

For the next several years, the trust-architecture problem inside the operator workflow is going to compound. AI-augmentation capability will continue to improve. The visible variance between AI-augmented content and substantive-author-driven content will continue to compress. The detection infrastructure will continue to lag the augmentation capability by some structural margin.

The compounding produces a structural shift in operator-grade workflow that the broader trade-press coverage has generally not surfaced. The shift is from documentary-quality-as-signal to live-engagement-as-signal across the categories of work that depend on the signal. The shift is real, the implications are durable, and the operators who recognize the shift early produce better decision outcomes than the operators who continue to rely on the prior-decade signal architecture.

The AI content problem is not, primarily, a public-safety problem about misinformation reaching consumers. It is an operator workflow problem about the trust architecture for decisions made on documentary-quality signals. The two problems are related but structurally distinct. The durable read should engage with the workflow problem directly rather than treating the public-safety framing as the central concern. The workflow problem is the durable problem. Build for it.

—TJ