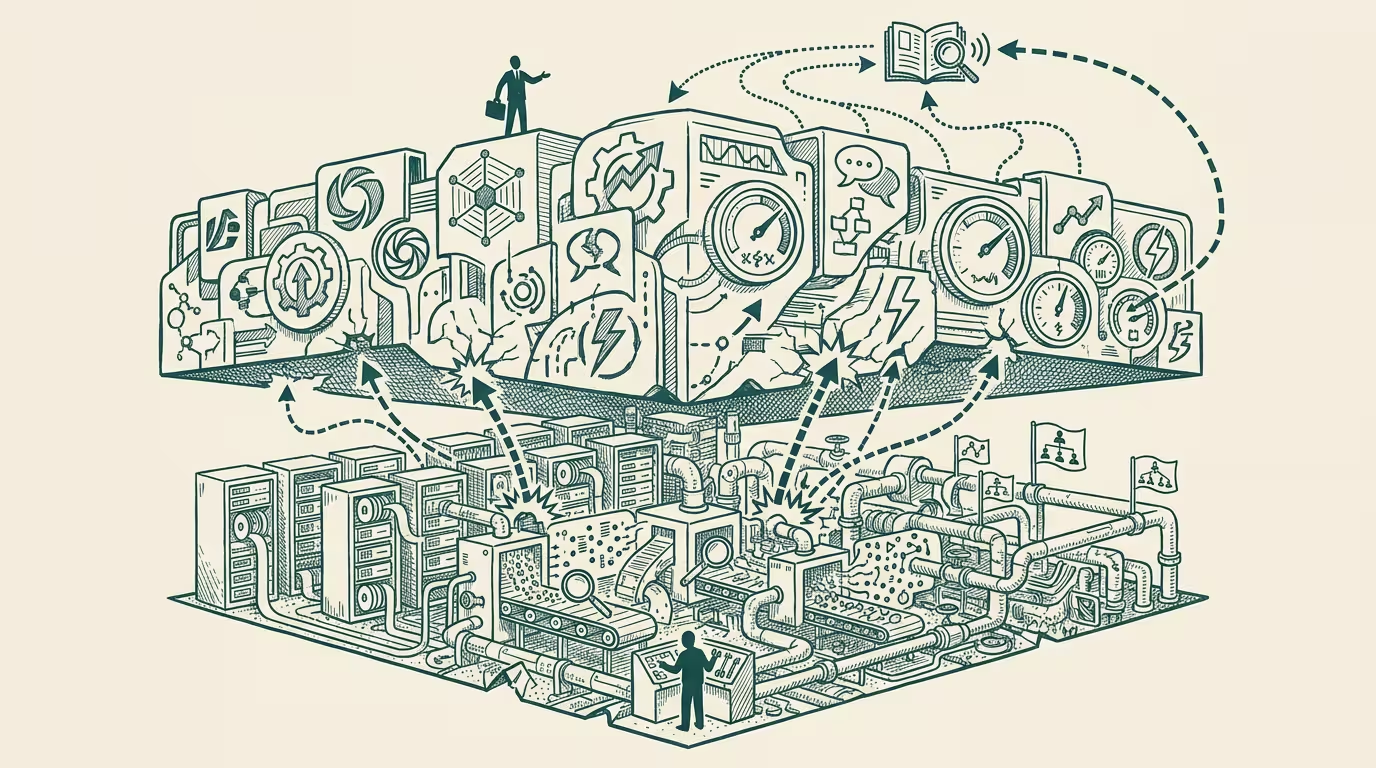

AI deployment is a data-engineering problem in a model-engineering costume.

A long Hacker News thread in late October 2025 dissected the structural conditions under which AI agents fail in production. The thread ran several thousand comments deep, with senior infrastructure engineers, ML platform leads, and operator-class deployers weighing in. The consensus that emerged is the structural fact vendor marketing still avoids.

_Deployment failures are organizational and data-quality problems, not capability problems._

The capability layer keeps shipping. Frontier models keep getting better at reasoning, tool use, code generation, and multi-step task execution. The deployment layer keeps failing for reasons that have nothing to do with the model's capability and everything to do with the data the model is asked to operate on, the workflows the deployment is calibrated to, and the human-and-system integration discipline the operator class hasn't built yet.

The pricing-and-packaging consequence sorts the AI stack into three categories with very different retention profiles.

Frontier-model providers (OpenAI, Anthropic, Google) compete on capability and pricing. Switching costs are low because models are mostly substitutable through API abstractions. The category absorbs LLMflation directly; pricing compresses on the cost-decline curve.

Application-layer products (vertical SaaS with AI features bolted on) compete on workflow fit. Switching costs are moderate because workflow integration is deeper than model substitution but shallower than relationship-class integration. The category compresses through bundle-class commoditization as the larger platforms ship the same features natively.

Integration-layer businesses, by contrast, compete on operator-relationship-depth: the work that connects the model to the customer's data, identity, workflow, and compliance posture. Switching costs are high because each integration is calibrated to the customer's organizational specifics, and the calibration compounds across capability cycles. The category is structurally the most defensible in the AI stack.
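To make the "substitutable through API abstractions" point concrete, here is a minimal sketch of what that abstraction tends to look like in application code. Everything in it is illustrative: `ChatModel`, `EchoModel`, and `summarize_ticket` are hypothetical names, and the stand-in model makes no real vendor call. The point is only that the calling code never changes when the provider does.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatModel(Protocol):
    """Hypothetical provider-agnostic interface the application codes against."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Stand-in for a vendor client. Assumption: a real adapter would wrap an SDK here."""

    name: str

    def complete(self, prompt: str) -> str:
        # No network call; we just tag the prompt with the provider name.
        return f"[{self.name}] {prompt}"


def summarize_ticket(model: ChatModel, ticket_text: str) -> str:
    # The application depends only on the interface, not on any one provider.
    return model.complete(f"Summarize: {ticket_text}")


# Swapping providers is a one-line change at the call site.
provider_a = EchoModel("provider-a")
provider_b = EchoModel("provider-b")
result_a = summarize_ticket(provider_a, "Login fails after password reset")
result_b = summarize_ticket(provider_b, "Login fails after password reset")
```

Nothing about the customer's data, identity, or workflow lives in that interface, which is why the switching cost at this layer stays low.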

That sorting reveals where the data-quality layer matters. Data quality is the binding constraint on AI deployment quality, and operators routinely under-invest in it. Customer data in most enterprises is fragmented across legacy systems, partially structured, partially enriched, and partially stale. Deploying AI on top of that data substrate produces outcomes calibrated to the substrate's quality, not to the model's capability. Operators who do the data-quality work first capture deployment quality that operators who skip it cannot match. The work is unsexy (data cataloging, lineage tracking, quality monitoring, governance discipline) and operationally durable. Capital deployed against data quality compounds across capability cycles; capital deployed against capability access does not.
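For a sense of what the quality-monitoring piece of that unsexy work looks like at its smallest, here is a rough sketch that scores a batch of customer records for completeness and staleness. The record shape, the field names, the one-year staleness threshold, and the sample data are all assumptions made up for illustration, not anything from the thread.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class CustomerRecord:
    # Hypothetical schema; real customer data will have many more fields.
    customer_id: str
    email: Optional[str]
    industry: Optional[str]
    last_updated: date


def quality_report(records, today, max_age_days=365):
    """Return the share of records that are complete and the share that are stale."""
    total = len(records)
    if total == 0:
        return {"total": 0, "complete_pct": 0.0, "stale_pct": 0.0}
    # Complete: every enrichment field we care about is populated.
    complete = sum(1 for r in records if r.email and r.industry)
    # Stale: not touched within the allowed window (assumed threshold).
    stale = sum(1 for r in records if (today - r.last_updated).days > max_age_days)
    return {
        "total": total,
        "complete_pct": 100 * complete / total,
        "stale_pct": 100 * stale / total,
    }


# Made-up sample batch, not real data.
sample = [
    CustomerRecord("c1", "a@example.com", "retail", date(2025, 9, 1)),
    CustomerRecord("c2", None, "finance", date(2025, 6, 15)),
    CustomerRecord("c3", "c@example.com", None, date(2022, 1, 10)),
    CustomerRecord("c4", "d@example.com", "health", date(2023, 3, 5)),
]
report = quality_report(sample, today=date(2025, 11, 1))
```

On this sample, half the records are incomplete and half are stale. A model deployed on top of it inherits both problems regardless of how capable it is.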

What follows from the sort is that operator-class margin in 2026-2028 flows to the integration-layer businesses that solve the human-and-data-plumbing problem. Frontier-model pricing commoditizes through LLMflation. Application-layer pricing commoditizes through bundle compression. Integration-layer pricing remains durable because the operator-grade integration work is non-fungible across customers. Salesforce-class businesses (the integration partners who solve enterprise-class deployment) capture rents that frontier-lab and application-layer competitors don't have access to. The same margin profile made services-led software the durable category in pre-2020 enterprise IT; the dynamic recurs in AI deployment with AI-specific characteristics.

The same wedge generalizes across regulated-category AI deployments. Healthcare-AI integration: solving the EHR data-substrate quality problem is more valuable than the AI capability layer. Financial-services-AI integration: solving the legacy-core-banking-system data-substrate problem is more valuable than the AI capability layer. Government-AI integration: solving the federal-database integration problem is more valuable than the AI capability layer. Each category has its own integration-layer wedge with category-specific moat depth.

Three things survive all of this. The late-October HN thread is one of the cleaner 2025 articulations of the AI-deployment-as-data-engineering argument, and the argument holds. The integration-layer category is structurally the highest-retention category in the AI stack. And operator allocation through 2026-2028 should be heavy on integration-class businesses rather than on application-layer products competing on capability differentiation. Operators who recognize the structural shape are positioned for the durable-margin category. Operators who continue to position around capability differentiation are positioning for a margin profile that LLMflation is going to compress.

AI deployment is a data-engineering problem in a model-engineering costume. The integration-layer category is the operator-grade answer. The companies that recognize the costume and build for the substrate are the companies whose 2028 retention metrics will be durable. The companies that keep selling against capability are competing in a market where capability is being commoditized faster than they can capture rents from the differentiation.

The unsexy wedge wins. It usually does, in IT history. AI deployment is following the script.

—TJ