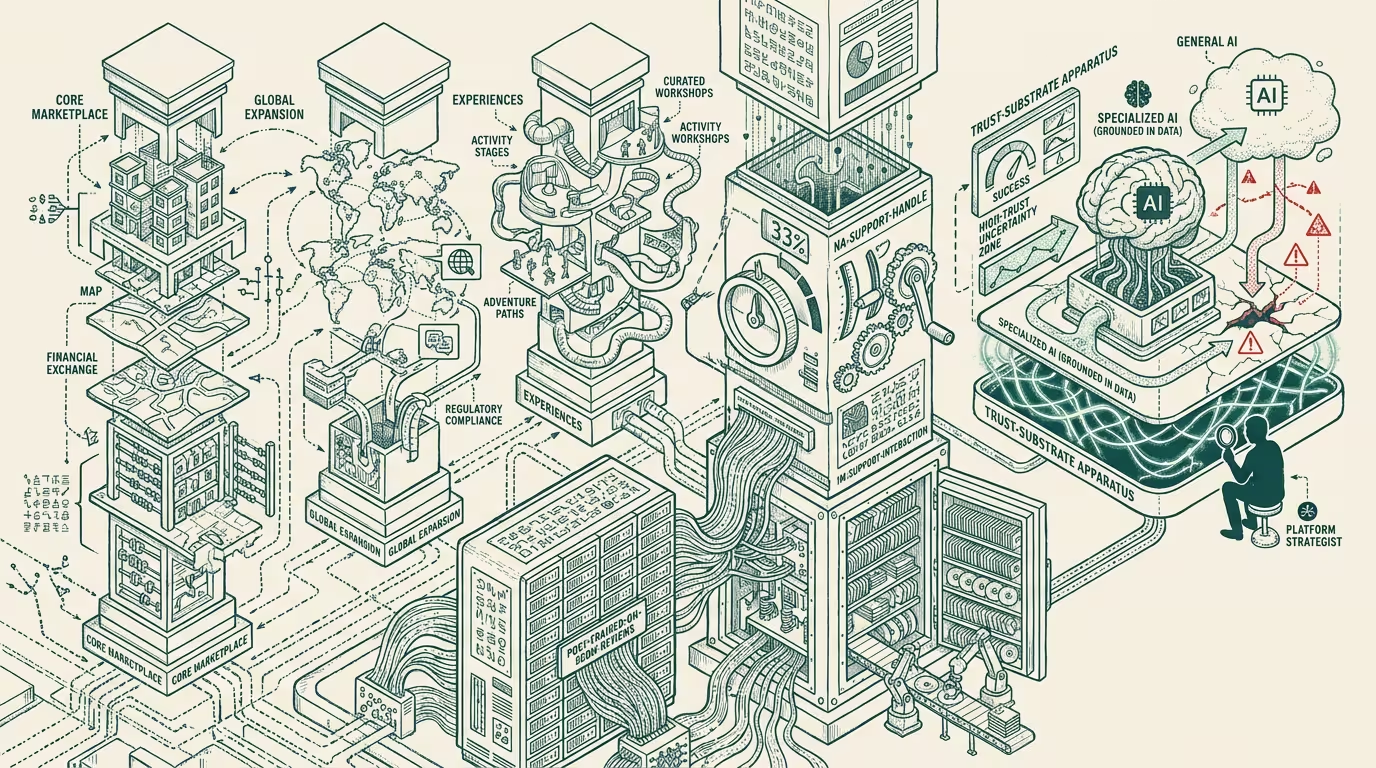

Airbnb made AI the fourth pillar. Specialized beat general where trust was scarcest.

Airbnb reframed its strategic-pillar structure in late 2025, adding AI as a fourth pillar alongside the core marketplace, global expansion, and Experiences. The Q4 disclosure that accompanied the reframing reported that AI resolved 33% of North American support interactions without escalation to a human agent. The model was post-trained on 500 million reviews and one million support interactions: proprietary domain data that no general-frontier model had access to.

The trade press read it as "Airbnb embraces AI." The structural read is sharper than the headline.

What does the reframing actually represent? Airbnb is the first major travel platform to publicly demonstrate that specialized proprietary AI outperforms general-frontier AI on domain-trust operations, and that demonstration is the template other operators in trust-scarce domains will run.

Where does the structural advantage live? In the post-training data. _500 million reviews and one million support interactions form a corpus that exists only inside Airbnb's data moat._ A general-frontier model (Claude, GPT, Gemini) has access to public-web reviews at non-trivial volume but to no proprietary support-interaction data. It can produce high-quality travel-related output on general-knowledge tasks. It cannot match Airbnb's specialized model on the specific operating tasks where trust is the binding constraint.

Why is trust the binding constraint? Because the support agent's job is to assess the credibility of competing claims (host vs. guest, damage vs. wear, host-fee dispute vs. legitimate refund), and that assessment requires domain-specific calibration a general-frontier model lacks without post-training. Airbnb's specialized model has that calibration. The 33% no-escalation rate is the structural evidence.

Where does the trust-scarce-domain pattern generalize? Beyond travel. In healthcare AI, the trust-scarce domain is clinical decision support, where the binding constraint is physician-level credibility assessment. In insurance AI, it is claims adjudication, where the binding constraint is separating fraudulent from legitimate claims. In banking AI, it is fraud detection, where the binding constraint is calibrating anomalous against legitimate transactions. Each category has its own version of Airbnb's structural problem, and the structural solution (specialized proprietary AI post-trained on domain data) is the operator-class playbook that recurs across all of them.

What's the actual moat? The data, not the AI capability. The general-frontier model will keep improving at general-knowledge tasks at frontier-lab cadence. The specialized model improves at domain-trust tasks at the rate the operator's domain data accumulates, and Airbnb's data moat compounds with every additional review and support interaction. The general-frontier model's improvement curve does not catch up with the specialized model in the trust-scarce domain, because the post-training data the specialized model uses is structurally unavailable to it. The moat stays durable only as long as the data-collection mechanism keeps running; its durability scales with the ongoing collection rate.

What does the 33% disclosure actually signal? Investor-class commitment, not marketing. When a public company reports the specific share of operations handled without human escalation, it is signaling that the AI capability is investment-grade and the cost-structure improvement is durable. In financial-disclosure terms, Airbnb's figure was a forward-looking commitment to the AI cost-structure trajectory. Operators in adjacent categories reading the disclosure should expect similar cost-structure repricing in their own categories within 18 to 24 months. The investor-class repricing of Airbnb's cost structure is the leading indicator for category-wide repricing.

What cuts across pillars is that the specialized-vs-general-AI question is the strategic question for every operator in a trust-scarce category. Operators with proprietary domain data have the option to run the specialized-AI playbook. Operators without it must acquire the data, partner for it, or accept the general-frontier model as the operating ceiling for their AI deployment. The strategic question is binary: either the company has the data to run the playbook or it does not. Companies that have the data and don't run the playbook are leaving operating leverage on the table. Companies that lack the data and try to run it anyway are paying for capability the data moat won't sustain.

What survives all of this: Airbnb's late-2025 reframing is one of the cleaner public markers of the specialized-vs-general AI question for trust-scarce domains; the data moat, not the AI capability, is the actual operating asset; and the operator-grade discipline is to assess every trust-scarce category for the specialized-AI playbook. By 2027, the categories that ran the playbook successfully will have visible cost-structure advantages over those that did not.

Airbnb made AI the fourth pillar. The pillar is the data moat. The AI capability is the surface-layer expression of the moat. Operators who recognize the moat as the asset are operating-coherent. Operators who treat the AI capability as the asset are competing against frontier labs on infrastructure they don't own.

—TJ