Denial works because appealing is expensive. Claimable inverts that.

A managed-care VP of claims operations opens her appeal-volume report on a Tuesday morning in Q2 2026. The number that lands is the year-over-year increase in appeal volume: not 5% or 10% but 800%. The legacy economics of algorithmic denial assumed a ceiling on the appeal rate, set by the effort it costs patients and providers to appeal. Something has changed in that effort cost, and the report on her desk is the operating evidence that the change has reached claims volume.

What changed is Claimable. Claimable's AI appeal-generation model launched in 2025 and reached scale through 2026, inverting the cost asymmetry that made algorithmic denial viable.

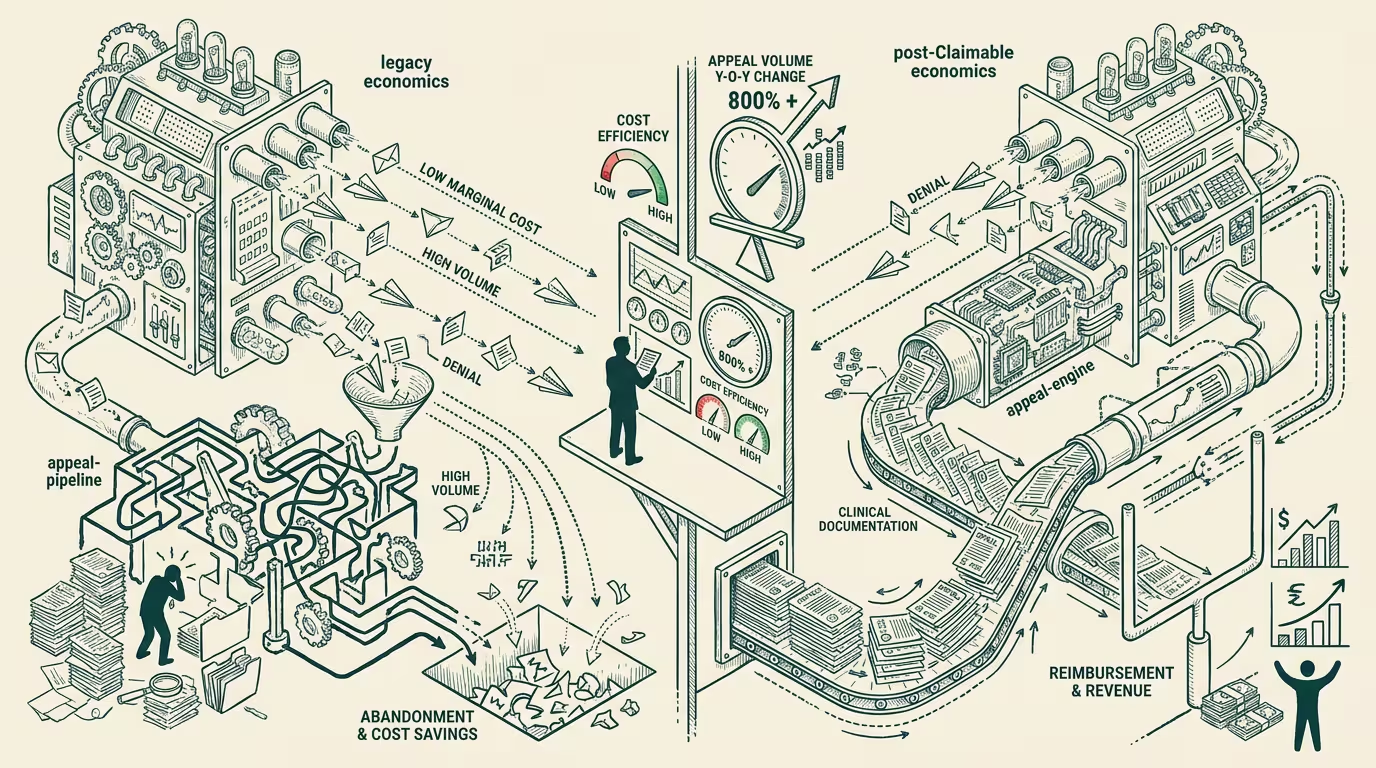

Algorithmic denial works because appealing is expensive. That asymmetry is the structural foundation of the prior-authorization-and-claim-denial economy. Insurers deploy AI to deny claims at machine speed and effectively zero marginal cost; patients and providers face human-class effort and cost to appeal. Most denials don't get appealed because the appeal cost exceeds the expected value of the appeal outcome. The denials stick. The insurer's algorithmic-denial deployment captures the rents.

_The marginal cost of appealing drops toward the marginal cost of denying._ When every algorithmic denial triggers an automated appeal with clinical documentation, the insurer's all-in cost per denial (the denial plus the appeal it now has to process) rises toward the marginal cost of approval. The arms race equilibrates.

Pre-Claimable: the insurer denies at $0.10 per denial; the patient appeals at $50-200 per appeal in human time plus clinical-staff documentation; the expected value of an appeal outcome is $200-1000. Only the highest-value appeals proceed, and the denial economy is asymmetrically profitable for insurers.

Post-Claimable (at scale): the insurer still denies at $0.10 per denial; the patient appeals at $1-5 per appeal via AI-generated documentation; the expected value of an appeal outcome is still $200-1000. Every appeal proceeds, and the denial economy approaches a symmetric cost structure.
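The arithmetic behind that comparison can be sketched in a few lines of Python. This is a minimal illustration, not a model of any real payer: it plugs in the dollar ranges quoted above, treats the quoted expected outcome value as already probability-weighted, and assumes a claimant appeals only when that value exceeds the appeal cost. The names `Regime`, `net_value_range`, and `cost_ratio_range` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Regime:
    """One cost regime, using the dollar ranges quoted in the text."""
    name: str
    denial_cost: float        # insurer's cost per denial
    appeal_cost_low: float    # cheapest appeal
    appeal_cost_high: float   # most expensive appeal


# Expected value of an appeal outcome, the same in both regimes (from the text).
OUTCOME_LOW, OUTCOME_HIGH = 200.0, 1000.0

PRE = Regime("pre-Claimable", 0.10, 50.0, 200.0)
POST = Regime("post-Claimable", 0.10, 1.0, 5.0)


def net_value_range(r: Regime) -> tuple[float, float]:
    """Worst and best case of (expected outcome value - appeal cost)."""
    worst = OUTCOME_LOW - r.appeal_cost_high
    best = OUTCOME_HIGH - r.appeal_cost_low
    return worst, best


def cost_ratio_range(r: Regime) -> tuple[float, float]:
    """How many times more an appeal costs than the denial it answers."""
    return r.appeal_cost_low / r.denial_cost, r.appeal_cost_high / r.denial_cost


for regime in (PRE, POST):
    worst, best = net_value_range(regime)
    lo, hi = cost_ratio_range(regime)
    print(f"{regime.name}: net value ${worst:.0f} to ${best:.0f}, "
          f"appeal/denial cost ratio {lo:.0f}x to {hi:.0f}x")
```

On these figures, the worst-case pre-Claimable appeal only breaks even (a $200 expected value against a $200 cost), which is why low-value denials went unappealed. The worst-case post-Claimable appeal still nets $195, so every appeal clears the bar. The appeal-to-denial cost ratio falls from roughly 500x-2000x to 10x-50x: far narrower, though still above parity.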

The insurer's response is operationally constrained. Reverting to human-class denial review (slower, more expensive) gives up the cost advantage the algorithmic-denial business model depends on. Continuing algorithmic denial with rising appeal-induced costs compresses margin. Building counter-AI to evaluate AI-generated appeals adds complexity without resolving the cost symmetry. The arms-race equilibrium structurally favors the patient/provider side.

What's the operator-class opportunity inside the inversion? It is not the appeal tool itself; it is the denial-appeal exchange data. Claimable's appeal generation is the surface product. The proprietary asset that compounds is the dataset of denial-appeal pairs across payers, denial categories, clinical contexts, and outcome distributions. That dataset is operator-grade signal on payer behavior at a granularity no individual provider, no individual patient, and no individual payer can replicate. Pattern detection on the dataset reveals which denial categories are systematically reversible, which payers operate at which appeal-outcome rates, and which clinical-documentation patterns optimize appeal success. The dataset is the moat; the appeal generation is the data-collection mechanism.
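To make the shape of that asset concrete, here is a minimal Python sketch of what one denial-appeal record and one aggregate query might look like. Everything in it is an assumption for illustration: the field names, the `reversal_rates` helper, and the three placeholder records are invented here and say nothing about Claimable's actual schema or any real payer's behavior.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class DenialAppealPair:
    """Hypothetical record: one denial and the outcome of its appeal."""
    payer: str
    denial_category: str
    clinical_context: str
    overturned: bool  # True if the appeal reversed the denial


def reversal_rates(pairs):
    """Share of appeals overturned, grouped by (payer, denial_category)."""
    counts = defaultdict(lambda: [0, 0])  # key -> [overturned, total]
    for p in pairs:
        key = (p.payer, p.denial_category)
        counts[key][0] += int(p.overturned)
        counts[key][1] += 1
    return {key: won / total for key, (won, total) in counts.items()}


# Placeholder records, made up purely to exercise the function.
sample = [
    DenialAppealPair("payer_a", "medical_necessity", "oncology", True),
    DenialAppealPair("payer_a", "medical_necessity", "cardiology", False),
    DenialAppealPair("payer_b", "prior_authorization", "oncology", True),
]

for key, rate in sorted(reversal_rates(sample).items()):
    print(key, f"{rate:.0%}")
```

The point of the sketch is the grouping key. A single provider or patient sees only its own rows; an aggregator holding rows from many of them can compute the rate per payer and denial category, which is the signal the paragraph above describes.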

What's the durable business model inside the opportunity? The infrastructure-layer model (sell the proprietary denial-appeal-exchange dataset to providers, ACO-class organizations, and payer-network strategists) is structurally more durable than the per-appeal billing model. Per-appeal billing at $1-5 captures the difference between what the consumer would have paid and the AI's marginal cost. The model is operationally simple but structurally exposed to commoditization: other AI-appeal generators emerge, and pricing compresses to AI-cost-plus-margin. The dataset, by contrast, is non-replicable. Operators building toward the infrastructure-layer model are positioning for the durable category. Operators billing per-appeal are positioning for the commoditizing category.

Is the regulatory frame going to constrain the inversion? Not through 2027-2028. State-level patient-rights legislation supports the right to appeal. Federal HIPAA frameworks support patient data access (the prerequisite for AI-appeal generation). The AMA and other physician advocacy bodies are aligned with the inversion (denial-management burden has been a long-standing physician complaint). The regulatory environment is structurally permissive, and the window for operator scaling is wide. Insurers may eventually push for regulatory restrictions on AI-appeal generation, but the patient/provider constituency is larger and more aligned than the insurer lobby on this question.

The same shape recurs across categories where one side has deployed AI to capture rents from the other side's high-friction response. Insurance fraud-detection AI faces consumer-AI counter-tools that automate appeals of disputed flags. Background-check AI faces consumer-AI counter-tools that contest erroneous flags. Employment-AI screening faces candidate-AI counter-tools that contest opaque rejections. Each category has its own version of the asymmetry-inversion arc, with its own timing and its own operator-level playbook.

What survives all of this: Claimable is one of the cleaner 2026 examples of AI-asymmetry-inversion in healthcare, the infrastructure-layer dataset is the actual operator moat (not the appeal-generation surface), and the regulatory environment is structurally permissive of scaling through 2027-2028. By 2028 the denial-appeal exchange data will have surfaced patterns that reshape payer-class denial behavior, ACO-class network strategy, and patient-class advocacy positioning. The operators who built the infrastructure layer capture the rents that follow from owning the data.

Denial works because appealing is expensive. Claimable inverts that. The inversion is real, the operator moat is the dataset, and the regulatory frame supports the scaling. Operators reading the inversion as the surface product miss the data-layer opportunity. Operators reading the dataset as the moat are calibrated to the durable category that the asymmetry-inversion arc creates.

—TJ