The fine-tune is dead. Long live the prompt engineer.

The fine-tune is dead. The headline is correct. The headline is also the trap.

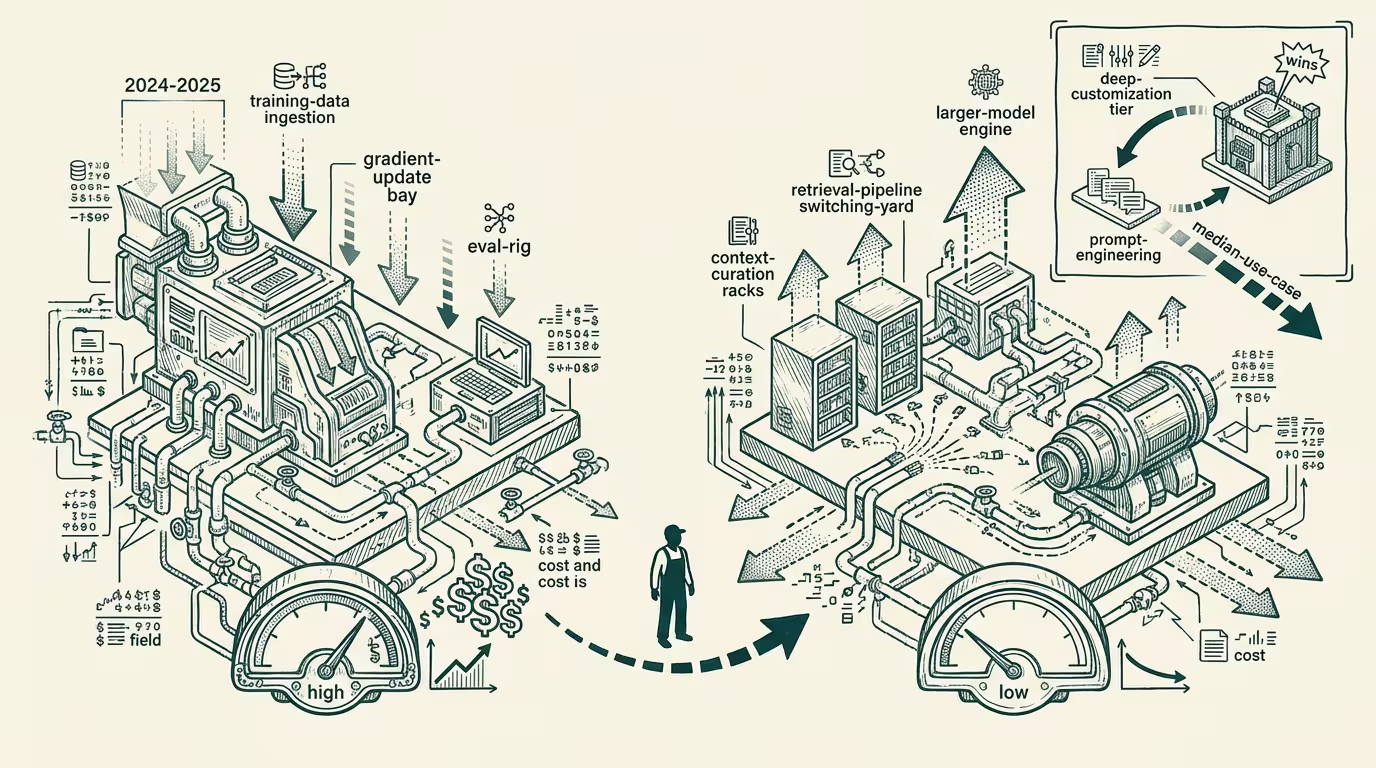

By late 2025 the cost curves had tilted decisively, and the engineering teams that had to make the call had already made it. The numbers were not subtle. A team that wanted a customer-service triage classifier could fine-tune a mid-tier open model for somewhere in the low five figures of compute and a few weeks of MLOps work, host it themselves, and pay an inference cost that rounded to nothing per call. Or the team could write a careful prompt against the frontier model, ship the same classifier in a sprint, pay a fraction of a cent per call to a hosted API, and skip the hosting infrastructure entirely.

For the median case, the prompt-engineered version won. It won on time-to-ship. It won on cost-at-volume below a threshold most teams never crossed. It won on capability ceiling, on maintenance burden, and on the speed at which it improved when the upstream model improved. The fine-tuned version won on three things: cost-at-volume above the threshold, latency, and the kind of behavioral consistency compliance lawyers want to see. Three real wins. They mattered for a small minority of use cases. They did not matter for the median use case, and the median use case is what the engineering hours go to.
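To make that threshold concrete, here is a minimal Python sketch of the break-even comparison. It assumes a deliberately simple cost model: each option has a one-time cost, a flat monthly bill, and a per-call inference price. The `Option` type, the function names, and every dollar figure in the example are hypothetical placeholders, not numbers from any real provider or survey.

```python
"""Break-even call volume: fine-tune and self-host vs. a hosted frontier API.

A sketch under a deliberately simple cost model. All prices are hypothetical.
"""
import math
from dataclasses import dataclass


@dataclass
class Option:
    fixed_cost: float     # one-time dollars: training run, MLOps setup
    monthly_cost: float   # recurring dollars per month: hosting, on-call
    per_call_cost: float  # marginal dollars per inference call


def total_cost(opt: Option, calls_per_month: float, months: int) -> float:
    """Total spend over `months` at a steady monthly call volume."""
    return opt.fixed_cost + months * (opt.monthly_cost + calls_per_month * opt.per_call_cost)


def break_even_calls_per_month(api: Option, tuned: Option, months: int) -> float:
    """Monthly volume at which both options cost the same over `months`.

    Above this volume the fine-tuned option is cheaper. Returns math.inf when the
    fine-tune's marginal cost is not below the API's, since it can never catch up.
    """
    marginal_saving = api.per_call_cost - tuned.per_call_cost
    if marginal_saving <= 0:
        return math.inf
    # Extra spend the fine-tune carries regardless of volume over the horizon.
    extra_fixed_spend = (tuned.fixed_cost - api.fixed_cost) + months * (
        tuned.monthly_cost - api.monthly_cost
    )
    return max(0.0, extra_fixed_spend / (months * marginal_saving))


# Hypothetical inputs: a fraction-of-a-cent API vs. a low-five-figure fine-tune.
api = Option(fixed_cost=0.0, monthly_cost=0.0, per_call_cost=0.002)
tuned = Option(fixed_cost=30_000.0, monthly_cost=2_000.0, per_call_cost=0.0001)

threshold = break_even_calls_per_month(api, tuned, months=12)
print(f"Fine-tune wins above ~{threshold:,.0f} calls/month over 12 months")
```

The function just sets the two totals equal and solves for monthly volume; anything above the returned figure favors the fine-tune over the chosen horizon. Shortening the horizon raises the threshold, which is the depreciation problem in miniature: a fine-tune that must pay for itself before it goes stale gets fewer months to do it.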

So the median team stopped fine-tuning. By late 2025 the surveys reflected it. Fine-tuning had become a niche capability. The prompt engineer had become the operator-grade role.

That is the forecast as it stands on the flat-frontier model, and it is correct. It also goes wrong the moment it is read as a permanent claim. It is not. It is a statement about a specific economic moment, defined by a specific set of assumptions about where models live, who runs them, and what the marginal cost of inference looks like. Each of those assumptions is now in motion. When they finish moving, fine-tuning will come back, and it will come back in a different layer than the one that just got vacated.

The vacated layer is the frontier-foundation-model layer. The reason fine-tuning lost there is straightforward: the frontier moved faster than any fine-tuned snapshot of it could keep pace with. Fine-tuning a frontier model is an investment in a particular checkpoint of that model, and that checkpoint depreciates the moment the lab ships the next one. By the time a team had specialized the prior checkpoint to its task, the next checkpoint had arrived with new capabilities the team's specialization could not exploit, and the frontier's general capability gain had erased most of the specialization's task-specific edge. The economics of fine-tuning at the frontier are the economics of running on a treadmill that is speeding up underneath you. Most teams worked it out and stepped off.

The layer about to absorb fine-tuning sits below the frontier. Specifically: the layer of small, specialized models, often deployed at the edge, that do not need to keep up with the frontier because they are not trying to be general. The economics of fine-tuning a small specialized model are different in every meaningful dimension. The model is small enough that the fine-tuning job costs hundreds of dollars instead of tens of thousands. The deployment target is the operator's own infrastructure, often inside a device or a private network, where the cost of a frontier-API round-trip is not just compute; it is latency, privacy, regulation, or unavailability. The capability requirement is narrow enough that the fine-tuned model can compete with the frontier on the specific task even though it would lose on every other task. And the depreciation rate of the fine-tune is much slower, because the substrate model underneath is not racing toward a frontier; it is being optimized for parameter efficiency, inference speed, and quantization to a target device.

The frontier is moving fast. The edge model is moving slowly, deliberately, in a direction that makes the fine-tune more valuable rather than less.

Three categories of work end up in this re-emerging fine-tuning layer in 2026 and 2027, and they are the categories the prompt-engineering era did not solve.

The first is regulated work where the data cannot leave the operator's environment. Healthcare records, financial transaction streams, defense and intelligence workflows, legal discovery, certain industrial telemetry. Sending the data to a frontier API is either non-compliant or strategically unacceptable. The frontier-prompt approach is unavailable. The deployable approach is a smaller model, hosted in the operator's environment, fine-tuned on the operator's data. The economics of the fine-tune do not have to beat the frontier-API economics, because the frontier-API economics are not on the table. They merely have to be viable as an in-environment deployment, which they are.

The second is latency-critical work where the round-trip to a frontier API is the bottleneck. Real-time call summarization where the latency budget is sub-second. On-device transcription and translation where the device may not have connectivity at all. Manufacturing-floor anomaly detection where the response loop has to close inside a few hundred milliseconds. Robotic control loops at any scale. The frontier API can be the most capable model in the world and it does not matter, because the network round-trip is the constraint, and the only way to remove the network round-trip is to put the model on-device. The on-device model has to be small. To be useful at small scale, it has to be fine-tuned on the task.

The third is cost-asymptotic work, where the per-call cost is multiplied by a call volume so large that the threshold the median team never crossed finally starts to bind. High-frequency trading. Programmatic ad bidding. Search-and-rerank pipelines at consumer-internet scale. Content-moderation pipelines at platform scale. The frontier-API price is a fraction of a cent per call. The volume is hundreds of millions of calls per day. The math at that scale eventually arrives at the same answer: a fine-tuned model that does the specific task at a fraction of the per-call cost will pay back its training run in weeks. The prompt-engineering generation skipped this layer because the volumes were not yet there for the median product. The volumes are getting there. At the next consumer-AI scale step, they will be there for products that do not yet exist.
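The payback arithmetic behind that claim can be sketched the same way. This is a minimal illustration, assuming the fine-tune's full cost is paid up front and savings accrue linearly per call. `payback_days` is a hypothetical helper, and the example inputs (200 million calls per day, the per-call prices, the daily hosting bill, a $1M all-in training and engineering budget) are placeholders picked only to sit inside the ranges the paragraph describes.

```python
import math


def payback_days(
    upfront_cost: float,
    calls_per_day: float,
    api_cost_per_call: float,
    tuned_cost_per_call: float,
    daily_hosting_cost: float = 0.0,
) -> float:
    """Days until per-call savings repay the one-time cost of a fine-tune.

    Assumes savings accrue linearly with volume and the upfront cost (training
    run plus engineering time) is paid on day zero. Returns math.inf if the
    fine-tuned model never saves money at this volume.
    """
    daily_saving = calls_per_day * (api_cost_per_call - tuned_cost_per_call) - daily_hosting_cost
    if daily_saving <= 0:
        return math.inf
    return upfront_cost / daily_saving


# Hypothetical platform-scale inputs, chosen only for illustration.
days = payback_days(
    upfront_cost=1_000_000.0,   # training run plus engineering, all-in
    calls_per_day=200_000_000,  # "hundreds of millions of calls per day"
    api_cost_per_call=0.0005,   # a fraction of a cent
    tuned_cost_per_call=0.0001,
    daily_hosting_cost=20_000.0,
)
print(f"Payback in about {days:.1f} days")
```

With those placeholder inputs the sketch reports about 16.7 days. The point is the shape rather than the number: because daily savings scale linearly with volume, every order-of-magnitude step in call volume cuts the payback period by roughly the same factor, until hosting costs are negligible by comparison.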

What that means for any AI infrastructure team thinking three to five years out is that the prompt-engineering victory is real but transitional. The current generation of AI products was built by teams that did not build a fine-tuning capability because they did not need one. The next generation, the regulated, latency-critical, and cost-asymptotic products, is being built by teams that will need one and do not yet have one. The infrastructure stack to support specialized fine-tuning at the edge-model layer is underbuilt. The tooling is fragmented. The talent that did this work in the foundation-model era is mostly inside the foundation-model labs. The operator-grade teams that need it most are the ones least equipped to build it. That gap will get filled, whether by an emerging set of fine-tuning-as-a-service platforms specifically targeting small models on edge deployment, by the foundation-model labs themselves shipping the tooling top-down, or by an open-source stack maturing fast enough to absorb the demand. The question is which.

So the headline is correct, and the headline is wrong. Fine-tuning at the frontier is dead. Fine-tuning at the edge is the next consequential layer of AI infrastructure, and the operator-grade teams that figure out how to build it before the platforms commoditize it will own the categories of AI workflow the prompt engineer cannot reach.

Long live the prompt engineer. Long live the fine-tune, in the layer where it actually pencils. Both, at once, in different rooms.

—TJ