Intelligence got cheaper. Judgment didn't.

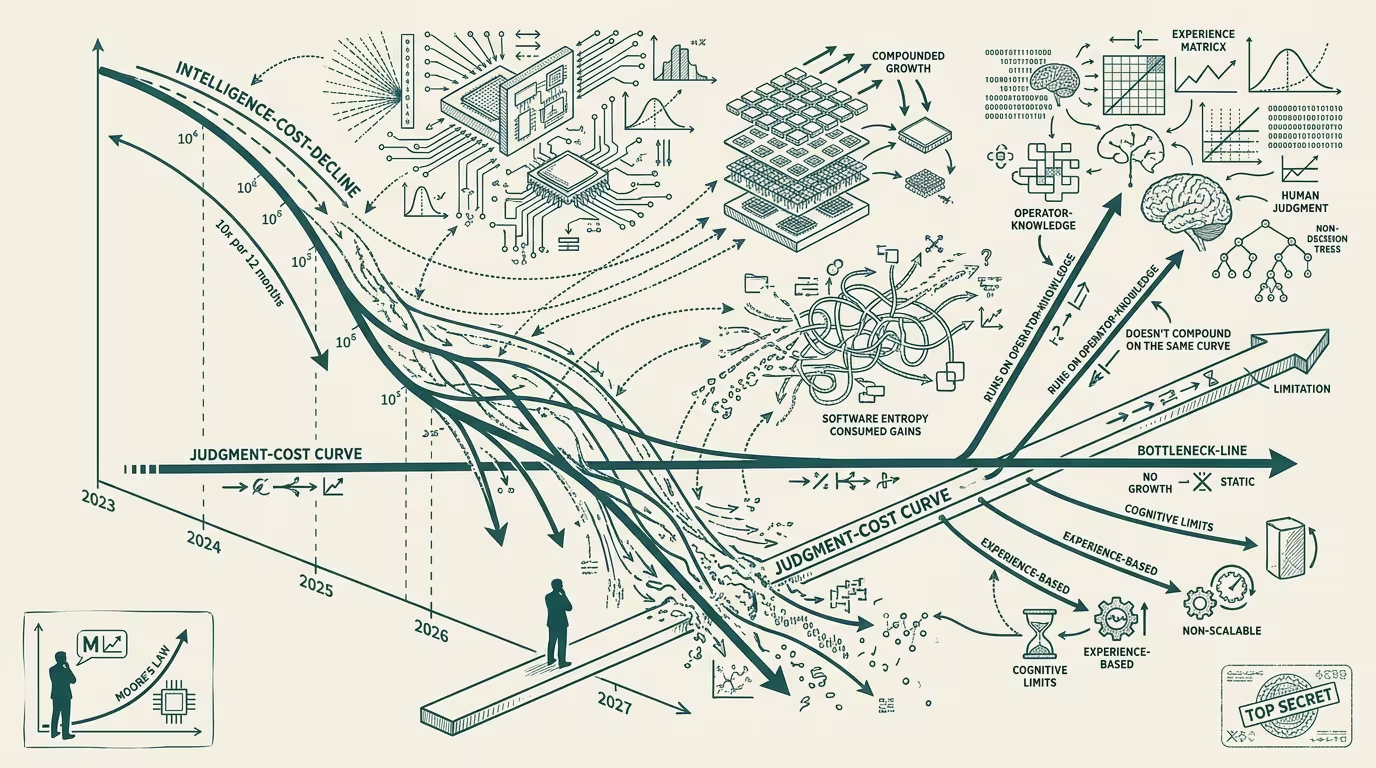

Sam Altman's "Intelligence Age" essay, published September 23, 2024, positions a 10x-per-12-months cost decline as the Schelling point around which enterprise AI investment should organize. The argument is that intelligence becomes a cheap input, applications proliferate, and the operators who deploy aggressively against the cost curve win the next decade.

The Moore's Law analogy carries the same limitation Moore's Law did. Transistor density compounded at 2x every 18-24 months for forty years, and the cost-per-transistor of computation dropped accordingly. Software entropy consumed most of the gains. The 2010 laptop ran software that _used_ the additional transistors without making the user's _task_ meaningfully faster. Word processors that started up in milliseconds in 1995 still take seconds in 2024 because the application gained features faster than the chip gained speed.

That is the pattern. Cost-per-input drops; usage-per-task rises; net cost-per-outcome flattens.

Intelligence is on the same curve.

Inference cost dropped 10x in 2024. The trade-press graph shows it. Altman's essay leans on it. The graph is real. The operator's per-task cost did not drop 10x.The operator's per-task cost rose, in many cases, because the operator started running agentic loops that consume 10-30x the tokens a single-shot prompt did in 2023. The cost-per-input dropped. The usage-per-task rose. The operator's quarterly cloud bill is what tracks the rise.

What the cost-decline graph misses is the load-bearing distinction: intelligence is the cheap input, and judgment is the scarce one.

Intelligence, in the Altman frame, is what the model produces. Judgment is what the operator does with the model's output. The model produces a triage classification. The operator-class judgment is whether to act on it, route to a human, or ignore. The model produces a code review. The operator-level judgment is whether to merge, request changes, or escalate. The model produces a deployment recommendation. The operator judgment is whether to ship, hold, or call the architect.

Judgment did not get cheaper. Judgment got, in operator-tier terms, _more expensive_, because the volume of model-output requiring judgment went up, the cost-per-judgment-event held constant or rose with task complexity, and the operator who scaled model-output without scaling judgment-capacity discovered the bottleneck the year after deploying the cheap model.

This is the thing the Intelligence Age framing does not surface. A 10x cost decline on intelligence does not produce a 10x productivity gain at the operator level, because productivity is downstream of judgment-capacity, and judgment-capacity is human-bottlenecked. The operator who reads the cost-decline as straightforward leverage is the operator whose 2025 plan is built on the wrong assumption. The operator who reads the cost-decline as _changing the cost-balance between intelligence and judgment_ is the operator who plans correctly.

That reframe has real implications.

If intelligence is cheap and judgment is scarce, the right operator move is to invest in judgment-multiplier infrastructure. Decision-support tooling that surfaces the model's output in the form a human judge can act on quickly. Audit-and-override systems that let a senior judge review a junior judge's calls. Workflow architecture that batches model-output for review rather than triggering judgment-events at random moments. The cost of intelligence is the cheap line; the operator's edge is in how much judgment-throughput their workflow can absorb per hour of senior-staff time.

The operators who get this build the next category of AI-deployment infrastructure. The operators who don't keep buying more inference and discover their senior staff are the bottleneck.

thing that crosses pillars is sharper. Healthcare-AI runs into this gap at maximum amplitude because the judgment-event in healthcare is _clinical judgment_, which is regulated, malpractice-exposed, and trained over years. The cost of clinical-judgment-throughput does not drop with model-cost decline. A radiology AI that triples the radiologist's case-throughput does not actually triple it; it triples the model-output, and the radiologist's actual throughput rises by maybe 30-40% because the judgment-step is the bottleneck. The hospital that bought the AI on the throughput-multiplier promise discovers in year two that the multiplier was on intelligence, not on judgment, and the radiologist is still the gating resource.

Travel-AI runs into the same gap. The corporate-travel buyer with an AI-policy-enforcement agent has the AI proposing actions; the buyer's judgment is what allows or denies the proposal. The AI proposes faster than the buyer judges. The buyer queue grows. The deployment fails not because the AI is wrong but because the human-judgment throughput cannot keep up.

Finance-AI runs into it. Compliance-AI runs into it. Every category where the AI's output requires human-judgment review runs into the same bottleneck.

The part that holds is two-part.

Part one: do not plan 2025 budgets on a 10x productivity gain. Plan on a 1.5-3x gain in _intelligence-capacity_, and a much smaller gain in _judgment-throughput_, and an operating model where the judgment-throughput is the load-bearing variable.

Part two: invest in judgment-multiplier infrastructure now. The companies that win the AI-deployment category in 2026-2027 are the companies that build the workflow apparatus that lets one senior judge handle the output of ten model-instances cleanly. That apparatus is not standard-issue. It has to be built. The operator who builds it in 2024-2025 is the operator running the durable AI deployment in 2027.

The honest summary: Altman is right that intelligence is getting cheaper. He is silent on the part that matters more, which is that judgment is not getting cheaper at the same rate, and the operator outcome depends on the gap between the two curves rather than on either curve alone. The Intelligence Age framing is a sales pitch for inference. The durable read is a sales pitch for everything else.

Intelligence got cheaper. Judgment didn't. The operators who confuse them are the operators paying for what they didn't need. The operators who don't are the operators running the categories that scale.

—TJ