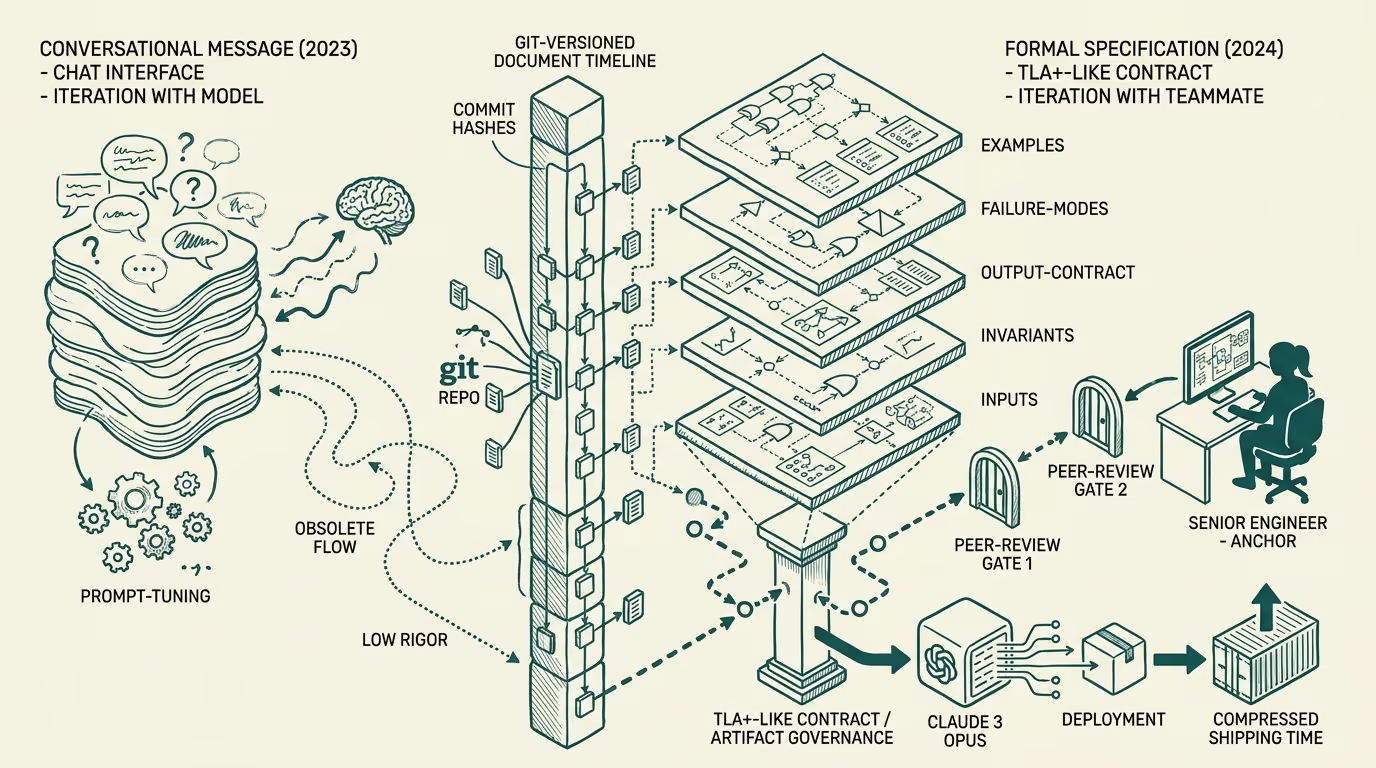

Specs are the new prompt.

The senior engineer in the corner of the office last December was not writing prompts. She was writing a spec. The artifact open on her left monitor, pinned to a `prompts/` directory inside the same git repo as the rest of the codebase, ran 240 lines. Sections labelled INPUTS, INVARIANTS, OUTPUT-CONTRACT, FAILURE-MODES, EXAMPLES. She had a peer-review tag open in another tab. She was not iterating with the model in the way every 2023 piece on prompt engineering described. She was iterating with her teammate.

The team had not changed. The tool had not really changed. What changed was the artifact.

_The prompt became a specification._ Not metaphorically. In the literal sense that the document she was writing carried the same structural commitments a TLA+ spec carries: explicit invariants, named pre-conditions, named post-conditions, a contract on what the implementation must satisfy. The frontier model on the other end of the contract, in this team's stack Claude 3 Opus through their internal proxy, was the implementation. The spec was the spec. Version-controlled. Reviewed. Tested against fixtures the way any other spec gets tested.

By the time I walked through three other production codebases over the autumn, the same shape had emerged in each. The pattern was not a single team's idiosyncrasy. The category had shifted in a way the trade press had not yet named.

This is the case-study walk through that shift, with three concrete before-and-after pairs from teams I worked with directly. The point is not that prompt engineering is dead, in the sense that the discipline of writing precise instructions to a language model went away. The point is that the artifact that holds that discipline got an entirely new shape, and the operating consequences for a team that recognized the shape change in 2024 are non-trivial.

Case 1, healthcare claims-routing team

Before. The claims-routing team was using a Slack bot that wrapped Claude. The bot's behaviour was governed by what they called "the prompt," a string concatenated together inside a Python function in their `routing_logic.py` module. Two engineers had write access. The string was about 6,000 characters. It had been edited 47 times in three months. There was no test for it.

When the model returned a routing decision the team's compliance lead disagreed with, the only way to figure out which version of the prompt had produced that decision was to dig through the git blame of `routing_logic.py` and reconstruct the string at the moment the decision was made. Twice that process took half a day.

After. Late November the same team checked in `prompts/claims-routing/v1.spec.md`, a 380-line markdown document with the spec sections I described above. The implementation in `routing_logic.py` became a 12-line loader that read the spec file at runtime. Every decision the bot returned now carried a header in its response: SPEC-VERSION: f3a9c2b. The spec lived in git. Reviews on changes to it went through the same code-review process every other PR went through. The compliance lead now read the spec directly. When she disagreed with a decision, the conversation was about the spec language. Compliance lead, two engineers, a literal markdown file on a screen.

The fix was not a tool change. The fix was treating the artifact as a spec.

Case 2, B2B onboarding team

Before. The onboarding team had a different problem. They had eleven different prompts running across their product, each tuned by a different engineer, each living in a different file format. One was a YAML inside a config service, one was a Python f-string inside a class definition, three were JSON blobs inside a feature-flagging tool, the rest were scattered. The team had no shared mental model of what the prompts collectively expected the model to do. New engineers landed and had to read all eleven to understand which behavior was where.

A regression in early autumn took two weeks to find. Someone had edited the YAML prompt in the config service to add a new instruction; the instruction interacted in a way no one anticipated with the JSON-flag prompt that ran on the same code path two layers down; the failure mode showed up as flaky test output that was at first attributed to model nondeterminism. Two weeks to root-cause.

After. December close-out: the team committed to a `prompts/` directory at the root of the monorepo. Eleven markdown files. Each carrying the same INPUTS / INVARIANTS / OUTPUT-CONTRACT / FAILURE-MODES / EXAMPLES sections. A small Python module loaded them at runtime by name. Code that previously had inline prompts now imported the spec by reference. Reviews on prompt changes went through the same workflow as code reviews. A new test fixture, `tests/spec_smoke/`, ran each spec against a small set of golden inputs and verified the model's output matched a flexible-grammar regex extracted from the OUTPUT-CONTRACT section.

The two-week regression-hunting episode could not have happened against the new structure. The interaction between the YAML prompt and the JSON-flag prompt was visible at the level of the spec set. The team caught two similar interactions in the audit pass that established the new structure.

Case 3, internal tooling at a medium-sized SaaS company

Before. The tooling team built an internal copilot that helped engineers across the company write better commit messages, scope PRs, and run release-note generation. The tool was popular. The tool was also a black box: the engineer who built it had moved teams, the prompt was hardcoded inside a long Python function, and no one else on the new owning team felt comfortable changing it. The product became one of those organizational artifacts that everyone uses and no one maintains.

After. The new tooling lead spent two weeks on an inverse-engineering pass. They extracted the prompt from the Python function. They factored it into a spec with the same five sections. They wrote down what the team understood the tool was supposed to do. The spec turned out to disagree, in two specific places, with what the team had been telling new engineers about the tool's behaviour. The disagreement surfaced because the spec form forced the team to write it down.

The lead committed the spec, marked it `v1.0`, and the team now had a shared model of what the tool did. Three weeks later, the spec moved to `v1.2` after a real conversation about whether the tool's behaviour on certain edge cases was the right behaviour. That conversation could not have happened against the old artifact, because the old artifact was not legible enough for the conversation.

What changed at the artifact level

In all three cases the move was the same. The team stopped treating the prompt as a string and started treating it as a document. The document had structure. The structure was reviewable. The structure was testable. The structure was version-controlled.

The implications compounded. Once the artifact was a document, the team could:

- Diff prompt changes the way they diff code changes. - Review prompt changes through the same workflow as code reviews, with named reviewers. - Test prompts against fixtures that lived alongside the spec. - Migrate between models by re-running the test fixtures against the new model. - Audit the prompt history when a regulatory or compliance question landed. - Onboard new engineers by handing them a directory of specs to read.

None of those affordances existed at the level of "the prompt is a string inside a function." All of them existed at the level of "the prompt is a markdown file with structure." The artifact change unlocked the operating affordances; the affordances unlocked the team's ability to ship faster on a foundation they trusted.

What this looks like by the end of 2025

The teams I have walked through that recognized the shift in early 2024 are six to nine months ahead, by my read, of the teams that have not. Concretely: they ship prompt changes through the same review process they use for any other behavioural change. They have prompt-fixture test runs in CI. They have versioned spec files referenced by SHA in their model-output logs. They have a mental model of "this is what the model is supposed to do" that is legible to product managers, compliance staff, and the rest of the engineering org, not just to the engineers who originally wrote the prompt.

By the end of 2025, the teams that did not make the shift are still treating their AI features the way they treated them in 2023: as opaque, hard to maintain, prone to silent regressions, dependent on the institutional knowledge of one or two engineers. The cost of maintaining those features will rise as the surface area grows. The cost of the spec form is paid up front, once, and it amortizes.

The operating call

If you are running an AI feature in production at any scale, the call is straightforward. Treat the prompt as a spec. Give it the structure a TLA+ spec has, or a YAML schema has, or any other artifact-with-contract has. Put it in git. Review it. Test it. Version it. Stop calling it a prompt at the moment your team's mental model of it shifts toward "this is the contract the model satisfies." Start calling it a spec.

The case studies above are not edge cases. They are the early signal of where the category lands. Specs are the new prompt. The teams that recognize that ship; the teams that do not become the case study three years from now of an AI deployment that aged the way most legacy code ages, slowly, expensively, and through one engineer at a time.

—TJ