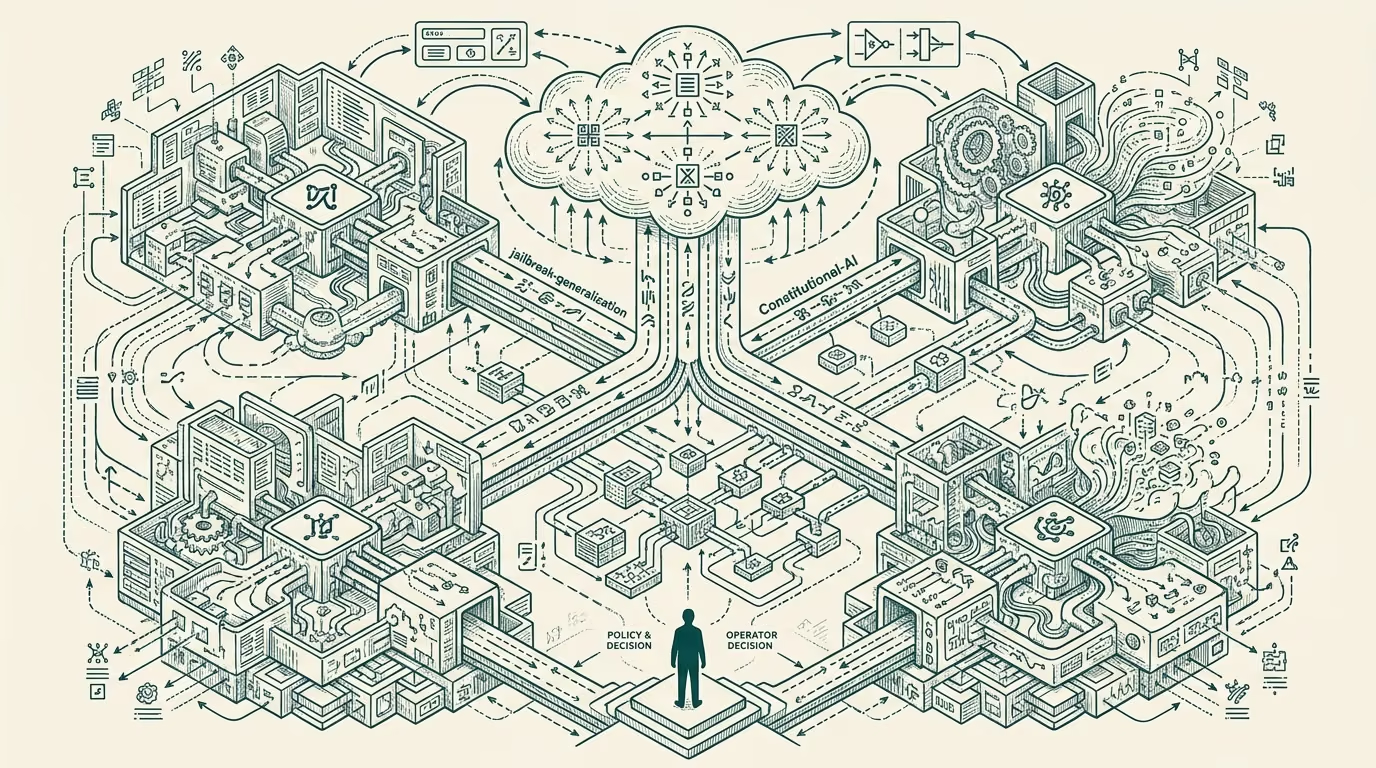

What the safety teams got right in 2024 shows up in the Claude Constitution and the 36-AG pushback.

The AI safety research community at the major foundation-model labs (Anthropic, OpenAI, Google DeepMind, plus the various academic-and-third-party institutions doing aligned research) had a more accurate predictive track record through 2024 than the broader discourse credited. The trade-press and venture-class commentary through 2024 often framed the safety teams as overly-cautious or doom-oriented, with the implication that the teams' concerns were academic rather than operationally relevant. The operational record produced in 2024 surfaced several concerns the safety teams had flagged early, with the corresponding consequence that the dismissive framing was less accurate than the framing implied.

This retrospective walks what the safety teams got right in 2024, what they got wrong, and what the part that holds on the next year of safety-research-and-policy engagement should be.

What the safety teams got right

The first specific call the safety teams got right was on jailbreak generalization. Through 2023-2024 the safety research community had been flagging the risk that adversarial prompts which jailbreak one model would generalize across other models, with the consequence that defending against jailbreaks at the model-vendor level required ongoing investment rather than one-time hardening. The 2024 deployment data validated the concern, with publicly-disclosed jailbreak techniques transferring across vendor offerings at substantial rates and with the model-vendor-class needing to ship sustained mitigation work to address the surface.

The second specific call the safety teams got right was on prompt-injection-at-scale. The community had been flagging through 2023 that prompt injection (where adversarial content embedded in retrieved documents or tool-use outputs hijacks the model's instruction-following) would scale into a substantive operational problem as agentic deployments matured. The 2024 deployment record validated this concern as well, with several visible incidents and the broader adoption of mitigation infrastructure that the safety teams had been advocating for.

The third specific call the safety teams got right was on evaluation-suite contamination. The community had been flagging that benchmark-and-eval suite contamination (where the test set leaks into the training data, producing inflated benchmark scores that do not reflect actual capability) would become a meaningful problem as the foundation-model training scale increased. The 2024 evaluation-research record produced multiple visible cases of contamination, with substantial methodological work to detect and mitigate the problem now being a standard part of evaluation infrastructure.

The combined record on these three calls is meaningful. The safety teams identified three operationally-significant concerns early, the broader discourse generally underweighted the concerns at the time, and the deployment record produced exactly the operational problems the safety teams had flagged. The teams also produced the mitigation work that the broader category eventually adopted.

The Claude Constitution work, the various transparency-and-evaluation publications across the labs, and the 36-state-attorney-general pushback against the broader-deployment policy direction in late 2024 are all visible expressions of the safety-team work landing in the public regulatory-and-policy environment. The work is not just academic; it is shaping the operational and regulatory framework the broader category runs against.

What the safety teams got wrong

The safety teams also got specific things wrong, and the retrospective should attend to these symmetrically.

The first thing the safety teams got wrong was on the timeline-and-magnitude of certain capability concerns. Several visible safety-research papers through 2023-2024 projected capability development on timelines that the actual development trajectory did not match. The capability did not arrive on the projected timeline in some cases, did not arrive in the projected magnitude in other cases, and arrived in a different shape than the safety-research framing had implied in still others. The discourse framing of safety-teams-as-doomers was inaccurate in the broad characterization but had a kernel of accuracy in the specific timeline-and-magnitude projections that did not validate.

The second thing the safety teams got wrong was on the policy-and-regulatory framing of certain concerns. Several specific policy positions the safety community advocated for through 2023-2024 produced regulatory reactions that did not address the underlying technical concerns and that produced operational friction without producing safety benefit. The advocacy work, while motivated by genuine concerns, sometimes produced policy outcomes that the safety community itself acknowledged were not the optimal expression of the underlying safety motivation.

The third thing the safety teams got wrong was on the operator-class engagement. Through 2023-2024 the safety research community sometimes engaged with the operator-grade deploying AI products in ways that produced friction rather than collaborative improvement. The communication framing, the technical specificity of the concerns, and the practical-deployment guidance that operators needed were sometimes mismatched with what the safety community produced. The mismatch produced the discourse framing of safety-teams-as-disconnected-from-deployment, with the framing being partly inaccurate and partly an indictment of the engagement quality the safety community had been producing.

What this implies for 2026

For operators reading the safety-research engagement in 2026, the practical advice is to engage with the safety community as a substantive technical partner rather than as either an adversary or as a check-box compliance requirement. The safety teams have demonstrated calls that the operator would have benefited from heeding earlier; the operators who have been engaging substantively with the safety community have produced more durable products than the operators who treated the engagement as adversarial.

For investors evaluating AI investments in 2026, the read is that the safety-engagement quality of an investment is a substantive due-diligence dimension that the broader investment-class has often under-weighted. Companies whose safety posture is rigorous and whose engagement with the safety community is collaborative produce more durable products and face fewer of the regulatory-and-deployment failures that surfaced through 2024.

For policy-class engagement, the read is that the safety community's track record warrants substantial weight in the policy-shaping work, with the recognition that the community has been right on technical concerns more often than the broader discourse credited and has been imperfect on the policy-and-regulatory-framing translation work. The combined record supports continued substantive engagement with the safety community on technical questions, with the specific policy translations being negotiated against the operational realities the operators face.

The safety teams got more right in 2024 than the discourse credits. The specific things they got wrong should be acknowledged symmetrically. The combined record supports a more substantive operator-level engagement with safety research than the dismissive framing has been producing. Build accordingly.

—TJ