The synthetic-data debate is a distraction.

The synthetic-data debate that has been running through the AI conversation for the last six months is a distraction. The Shumailov et al. “Curse of Recursion” paper from mid-2023 was a useful piece of work that showed something narrow and real: a model trained recursively on its own outputs degrades. In the commentary that followed, the paper has been read as showing something much wider and not actually true: that synthetic data is a category-level dead end, and that foundation models will inevitably hit a wall when they exhaust the supply of human-written training data. This wider claim is the distraction. It is not the constraint the labs are actually operating under, and it is not the question that will determine which models look strong in 2025 and 2026.

First, the empirical point. The Shumailov result, in its strongest form, depends on a particular setup: recursive training on model outputs with no data curation, no quality filtering, and little or no mixing-in of fresh human-written samples. As far as is publicly known, none of the production foundation-model labs train this way. Anthropic, OpenAI, Google DeepMind, Meta, Mistral, and the major Chinese labs all describe heavy curation pipelines in which synthetic data is one input among several, is filtered for quality before being incorporated, and is mixed with human-written data under regimes that the paper's model-collapse setup did not test. The production training setup is structurally different from the experimental setup that produced the alarming result, so the conclusion of that result does not directly transfer.
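To make the structural difference concrete, here is a minimal sketch of the filter-then-mix step described above. It is an illustration under stated assumptions, not any lab's actual pipeline: the `Sample` type, the `quality` score (imagined as coming from some upstream grader), the `build_mix` function, and both threshold values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    text: str
    source: str     # "human" or "synthetic"
    quality: float  # hypothetical score in [0, 1] from an upstream grader


def build_mix(human, synthetic, min_quality=0.7, max_synthetic_fraction=0.3):
    """Filter both pools by quality, then cap the synthetic share of the mix."""
    if not 0 <= max_synthetic_fraction < 1:
        raise ValueError("max_synthetic_fraction must be in [0, 1)")

    # Quality filtering applies to both sources: provenance alone admits nothing.
    kept_human = [s for s in human if s.quality >= min_quality]
    kept_synth = sorted(
        (s for s in synthetic if s.quality >= min_quality),
        key=lambda s: s.quality,
        reverse=True,
    )

    # Largest synthetic count n with n / (n + h) <= f, i.e. n <= f * h / (1 - f).
    # int() rounds down, so the cap is never exceeded.
    cap = int(max_synthetic_fraction * len(kept_human) / (1 - max_synthetic_fraction))

    # Human samples first, then the highest-scoring synthetic samples up to the cap.
    return kept_human + kept_synth[:cap]
```

The pure-recursion setup corresponds to skipping the filter and passing an empty human pool, which is exactly the regime this kind of pipeline is built to avoid. In this sketch an empty human pool yields an empty mix, because the cap is defined relative to the human samples that survive filtering.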

Second, the conceptual point. The implicit framing of the synthetic-data debate is that data quality is binary (organic versus synthetic) and that the organic-data well is finite. Both halves of this framing are wrong. Data quality is not binary; it is a continuous distribution that the curation pipeline slices in ways that have very little to do with whether the source is a human writer or another model. A poorly curated batch of human-written internet text (which is most of the internet) is much worse training data than a carefully curated batch of model-generated reasoning traces. And once quality, not provenance, is the selection criterion, the supply of usable data is no longer capped by the stock of human-written text. The labs that have figured out how to extract signal from heterogeneous sources are doing so already, and the model-quality numbers from late 2023 onward reflect that work, not any inherent advantage of organic over synthetic data.

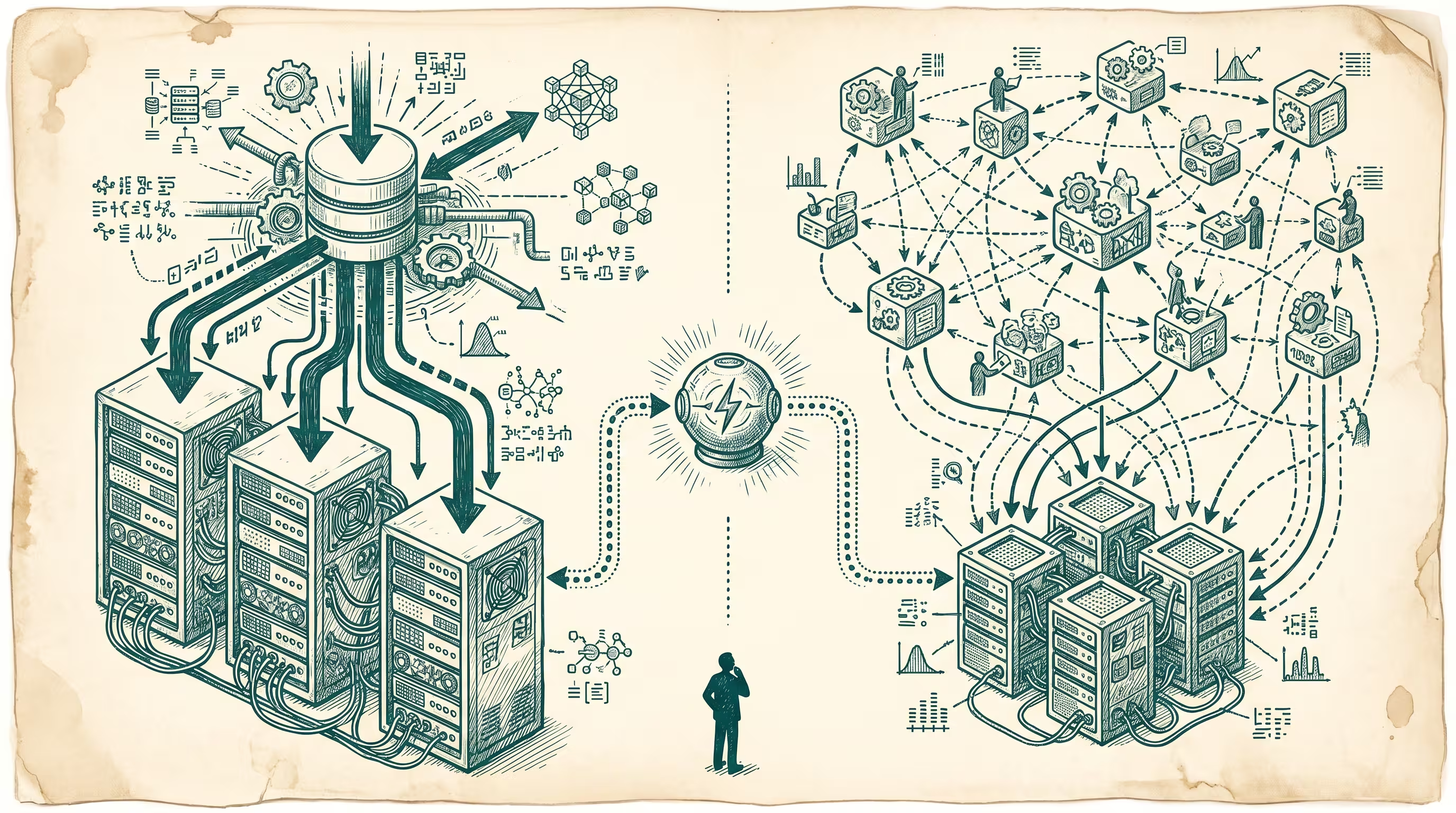

The debate that actually matters in early 2024, and that the synthetic-data conversation is obscuring, is the debate about distributed versus centralized data curation. The labs have, broadly, two strategies. One is to build curation pipelines internally, hire annotators and curators directly or through contracts (Scale AI, Surge, Toloka, and Invisible Technologies are the commonly named vendors), and treat the curation pipeline as a moat. The other is to push curation to the edges (community-built datasets like LAION and the open-source post-training datasets, Common Crawl curation work, and the various academic and civic-tech efforts at structured public-data release) and treat the curation pipeline as a public good. The first strategy is winning at the frontier-model layer in early 2024. The second strategy is winning at the specialized-and-domain-model layer. The interesting question is whether either strategy dominates the other on a five-year view.

The reason the centralized strategy is winning at the frontier is capital: the frontier-model labs can spend roughly five hundred million to one billion dollars per model on annotation and curation alone, and that spending compounds in the model weights in a way that community-built datasets cannot match on the same timeline. The reason the distributed strategy is winning at the specialized layer is that domain-specific curation requires domain-specific judgment, and the people with that judgment are not available to a frontier-model lab on the timeline a frontier-model lab operates on. A medical-imaging dataset built by a radiology consortium is, on the actual radiology task, more useful than a frontier-model lab’s general-purpose curation effort against the same pixels.

The five-year view I would set against the synthetic-data narrative is something like this. The frontier labs will continue to win at the frontier-model layer through the late 2020s, and the synthetic-data question will not be the binding constraint on their progress. The binding constraints are compute (where Nvidia’s margin and TSMC’s capacity matter more than the data question), reasoning scaffolding (where the curation of reasoning traces and the verification rubric matter more than where those traces came from), and post-training alignment (where the curation of preference data matters more than the scale of pre-training data). The frontier-model layer will not hit a synthetic-data wall. It may hit a compute wall, a power-grid wall, an alignment-evaluation wall, or a regulatory wall, but the data wall is downstream of the curation pipeline, which is in turn downstream of the capital and the operational discipline of the lab building it. The labs that win are the labs that have figured out how to curate; they are not the labs that have hoarded the largest pile of human-written text.

At the specialized-and-domain-model layer, the more interesting question is whether the open-source and domain-consortium efforts can sustain the curation discipline in the absence of the capital that the frontier labs spend. The pattern from late 2023 (the rapid rise of small specialized models trained against carefully curated domain datasets, often from medical or scientific consortia) suggests that the answer is yes, on at least some domains, and that these domain-curation efforts will capture a larger share of the long tail of AI value in 2026 and 2027 than the synthetic-data narrative would predict. The frontier models will remain the largest single capability surface; the specialized models, in aggregate, will likely represent more of the deployed-AI economic activity than the frontier-model narrative tracks.

The reframe I would offer for anyone reading the synthetic-data takes in early 2024 is this. The takes are answering a question that is not the binding question. The binding question for the labs is whether the curation pipeline can keep producing high-signal training data on a schedule that matches the model scaling curve. The binding question for the operators is whether the specialized-domain-model layer is the right place to build, given that the frontier-model layer is structurally going to be a winner-take-some market dominated by labs with capital that no operator can match. The synthetic-data debate is talking about neither of these. It is talking about a paper that showed one specific failure mode under one specific setup, generalized into a claim about the category that the underlying paper does not actually support. The takes will fade, the way most AI-cycle takes fade. The actual constraint, the curation-pipeline capital gradient, will keep operating in the background, and the labs that have built the right pipeline will keep compounding against the labs that have not.

—TJ