The eval is the product. The Texas AG healthcare AI settlement is the consequence of ignoring that.

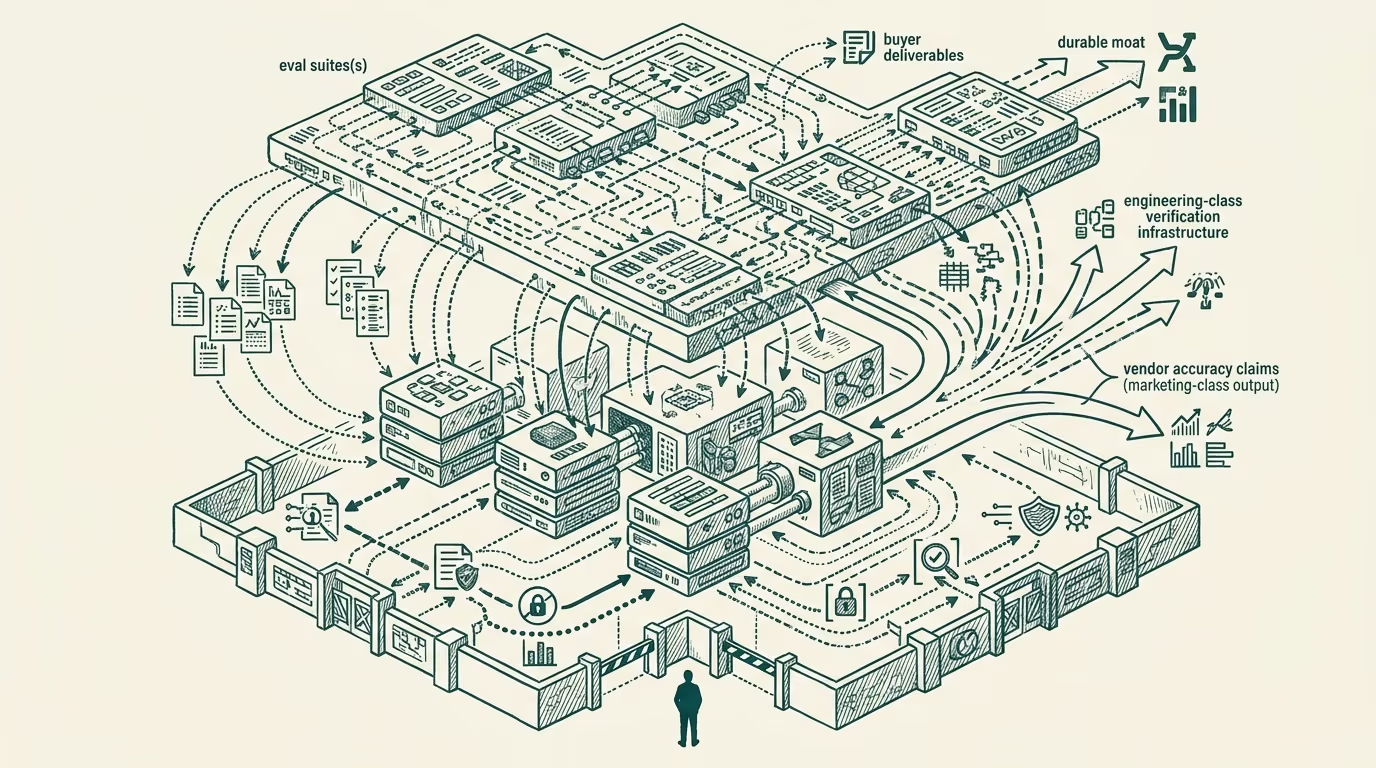

The thesis that operators working on production-AI deployments have been making since at least 2018 has finally landed in public regulatory discourse. The eval suite is where the moat lives, not the model weights. The model is, increasingly, a swap-in commodity. The eval-and-validation infrastructure is the durable engineering investment that determines whether a production AI deployment is reliable.

The 2024 Texas Attorney General settlement with a healthcare-AI vendor that had overstated its products' accuracy was the public regulatory consequence of ignoring this thesis. The vendor's accuracy claims were not supported by the eval and operational data underneath them; the marketing claims ran ahead of the engineering verification, and the regulatory action followed predictably. The settlement is one example. The pattern is broader.

Three concrete examples from production deployments running through 2024 and into 2025 show the eval-as-product framing in operation. In each, the eval suite is the deliverable the buyer pays for, and the underlying model is treated as substitutable infrastructure. The shape generalizes across categories.

The healthcare deployment with the swappable model

The first example is a major U.S. health system's 2024 deployment of an AI-augmented clinical-decision-support tool. The vendor's contract with the health system specified four things: the eval suite the vendor would maintain, the performance thresholds the model had to meet on that suite, the cadence of eval updates as the deployment context shifted, and the rollback infrastructure that would trigger if eval performance degraded below threshold. The contract did not specify which model the vendor would use. The model was treated as substitutable infrastructure that the vendor could update or replace without notice, provided eval performance held.
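
The contract terms above (a maintained eval suite, per-metric thresholds, and a rollback trigger) map naturally onto a small deployment gate. What follows is a minimal sketch, not the vendor's actual mechanism: it assumes each eval run yields named metric scores, that each threshold is a minimum acceptable score, and that rollback means reverting to the last model that passed. The metric names, threshold values, model names, and the `Deployment` class are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical record of the serving model and the last model that passed the eval."""
    active_model: str
    last_good_model: str


def failing_metrics(scores: dict[str, float], thresholds: dict[str, float]) -> list[str]:
    """Names of metrics that are missing from the eval run or below their contractual minimum."""
    return sorted(
        name
        for name, minimum in thresholds.items()
        if scores.get(name, float("-inf")) < minimum
    )


def enforce_thresholds(
    deployment: Deployment,
    scores: dict[str, float],
    thresholds: dict[str, float],
) -> list[str]:
    """Apply one eval run of the active model against the thresholds.

    Pass: the active model becomes the new last-known-good model.
    Fail: serving rolls back to the last-known-good model.
    Returns the failing metric names (empty when the run passes).
    """
    failures = failing_metrics(scores, thresholds)
    if failures:
        deployment.active_model = deployment.last_good_model
    else:
        deployment.last_good_model = deployment.active_model
    return failures


# Hypothetical contractual minimums and model names.
thresholds = {"sensitivity": 0.95, "specificity": 0.90}
deployment = Deployment(active_model="model-b", last_good_model="model-a")
print(enforce_thresholds(deployment, {"sensitivity": 0.97, "specificity": 0.88}, thresholds))  # ['specificity']
print(deployment.active_model)  # model-a
```

A missing metric counts as a failure, so a swapped-in model cannot pass by skipping part of the suite.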

The deployment ran through 2024-2025, and the vendor swapped the underlying model at least twice: from an initial foundation-model-vendor offering to a specialized fine-tune, then to a different foundation-model vendor's offering as the cost-and-performance trade-offs shifted. The buyer's clinical operations were not affected by the swaps because the eval-and-performance contract was the actual operational specification. The model was infrastructure; the eval was the product.
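
Swaps like these stay invisible to the buyer when the eval harness depends only on a narrow model interface. The sketch below is illustrative, not the vendor's harness: it assumes each eval case is a prompt plus one expected answer and that scoring is exact-match accuracy. `EvalCase`, `Model`, `accuracy`, and the two stand-in models are hypothetical; in practice the stand-ins would be real provider clients or fine-tunes.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    expected: str


class Model(Protocol):
    """Any prompt-to-answer callable: a vendor API client, a fine-tune, or a test stub."""

    def __call__(self, prompt: str) -> str: ...


def accuracy(model: Model, cases: list[EvalCase]) -> float:
    """Exact-match accuracy on the suite; the harness only calls the model, never inspects it."""
    if not cases:
        raise ValueError("eval suite is empty")
    correct = sum(model(case.prompt) == case.expected for case in cases)
    return correct / len(cases)


# Hypothetical suite and two stand-in models.
cases = [
    EvalCase("capital of France", "Paris"),
    EvalCase("capital of Japan", "Tokyo"),
]


def model_a(prompt: str) -> str:
    answers = {"capital of France": "Paris", "capital of Japan": "Tokyo"}
    return answers.get(prompt, "")


def model_b(prompt: str) -> str:
    return "Paris"  # a weaker stand-in that always gives the same answer


print(accuracy(model_a, cases))  # 1.0
print(accuracy(model_b, cases))  # 0.5
```

Because `accuracy` only calls the model, replacing one model with another requires no change to the suite; the score, not the model's identity, is what the contract checks.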

The vendor's competitive positioning in the broader category of similar tools was anchored on the quality of its eval suite and the depth of its validation infrastructure, not on the specific model in use at any given time. Competing vendors pitching the same buyer faced the eval-comparison question: the buyer evaluated their products against the deployed eval suite as the relevant benchmark.

The financial-services deployment with the explicit-substitution clause

The second example is a major U.S. payer's 2024-2025 deployment of an AI-augmented claim-routing tool. The vendor's contract with the payer included an explicit-substitution clause: the vendor was authorized to replace the underlying model with an alternative from the vendor's approved-substitution list, without per-substitution buyer approval, provided eval-suite performance was maintained.
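
A clause like this reduces to two conditions a vendor could check mechanically before any swap: the candidate is on the approved-substitution list, and its eval performance is maintained. The sketch below assumes "maintained" means the candidate's score on the shared suite is no more than a small tolerance below the incumbent's; a real contract would define that term itself. The list contents, the tolerance, and `substitution_allowed` are hypothetical.

```python
# Hypothetical approved-substitution list; a real one would be a contract exhibit.
APPROVED_SUBSTITUTES = frozenset({"model-a", "model-b", "model-c"})


def substitution_allowed(
    candidate: str,
    candidate_score: float,
    incumbent_score: float,
    approved: frozenset[str] = APPROVED_SUBSTITUTES,
    tolerance: float = 0.005,
) -> bool:
    """True if the swap needs no per-substitution buyer approval under the clause.

    Both conditions must hold: the candidate is on the approved list, and its
    score on the shared eval suite is at most `tolerance` below the incumbent's.
    The tolerance is an assumed reading of "performance was maintained".
    """
    return candidate in approved and candidate_score >= incumbent_score - tolerance


print(substitution_allowed("model-b", 0.912, 0.915))  # True: approved, within tolerance
print(substitution_allowed("model-x", 0.990, 0.915))  # False: not on the approved list
print(substitution_allowed("model-c", 0.900, 0.915))  # False: eval performance dropped
```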

The clause was negotiated to give the vendor flexibility to optimize cost-and-performance over time without renegotiating the contract for each model change. The clause's existence in a major payer's procurement contract is the visible signal that the eval-as-product framing has reached the contract-class layer, where it produces durable economic consequences.

The vendor's pricing and the buyer's evaluation framework both operated against the eval-suite as the central artifact. The model itself was not the focus of either side's evaluation. The pattern is the financial-services version of the healthcare pattern, with the same structural reading: the eval is where the moat lives.

The legal-tech deployment with the model-portability clause

The third example is a legal-technology vendor's 2024 deployment to a major U.S. law firm. The vendor's contract included a model-portability clause: if the buyer's compliance or regulatory environment required the vendor to swap the underlying model, the vendor was obligated to maintain the same eval-and-performance characteristics through the swap. For example, if the law firm needed to comply with data-residency requirements that the current foundation-model vendor's offering did not meet, the vendor was contractually obligated to migrate to an alternative foundation model that did meet them, with eval-suite performance maintained through the migration.
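
A migration under a portability clause like this can be framed as constrained selection: discard candidates that fail the compliance requirement, then keep only those that still clear the eval floor. The sketch below assumes each candidate advertises the regions where it processes data and has already been scored on the shared eval suite. The `Candidate` fields, region codes, floor value, and `migration_target` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    """Hypothetical description of a model the vendor could migrate to."""
    name: str
    regions: frozenset[str]  # regions where the provider processes data
    eval_score: float        # score on the shared eval suite


def migration_target(
    candidates: list[Candidate], required_region: str, score_floor: float
) -> Optional[Candidate]:
    """Best-scoring candidate that meets the residency requirement and the eval floor.

    Returns None when no candidate satisfies both, i.e. the migration cannot be
    made without breaking the eval-and-performance obligation.
    """
    eligible = [
        c for c in candidates
        if required_region in c.regions and c.eval_score >= score_floor
    ]
    return max(eligible, key=lambda c: c.eval_score, default=None)


# Hypothetical candidates, regions, and contracted floor.
candidates = [
    Candidate("incumbent", frozenset({"us"}), 0.93),
    Candidate("alt-residency", frozenset({"eu", "us"}), 0.92),
    Candidate("alt-small", frozenset({"eu"}), 0.85),
]
target = migration_target(candidates, required_region="eu", score_floor=0.90)
print(target.name if target else "no compliant candidate")  # alt-residency
```

Returning `None` rather than the best non-compliant option makes the failure case explicit: if nothing satisfies both constraints, the obligation cannot be met by a silent swap.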

The portability clause shows that the eval-as-product framing can absorb the downstream compliance complexity buyers face. The model is, again, substitutable infrastructure. The eval suite is the durable specification of what the deployment does, and the underlying model is an implementation detail that can be adjusted as the operational environment shifts.

What the framing implies for hiring

For operators building production-AI products in 2025-2026, the eval-as-product framing has substantial hiring implications.

The engineering talent that produces durable competitive advantage is the talent that builds high-quality eval suites, validation infrastructure, regression-detection mechanisms, and the broader operational-quality posture that supports the eval-as-product positioning. Hiring in 2024-2025 has concentrated on talent that builds models, prompts, and the rest of the engineering of the AI output itself. It needs to shift toward talent that engineers the evaluation of that output, which is a structurally different skill profile.
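
One small, concrete instance of regression-detection work: compare a candidate against the baseline case by case, so a model that holds its aggregate score while breaking previously passing cases still gets flagged. The sketch below assumes per-case pass/fail results keyed by eval-case ID; the IDs and results are hypothetical.

```python
def pass_rate(results: dict[str, bool]) -> float:
    """Fraction of eval cases that passed."""
    if not results:
        raise ValueError("no eval results")
    return sum(results.values()) / len(results)


def regressions(baseline: dict[str, bool], candidate: dict[str, bool]) -> list[str]:
    """Case IDs that passed under the baseline but fail, or are missing, under the candidate."""
    return sorted(
        case_id
        for case_id, passed in baseline.items()
        if passed and not candidate.get(case_id, False)
    )


# Hypothetical per-case results keyed by eval-case ID.
baseline = {"c1": True, "c2": True, "c3": False, "c4": True}
candidate = {"c1": True, "c2": False, "c3": True, "c4": True}

print(pass_rate(baseline), pass_rate(candidate))  # 0.75 0.75
print(regressions(baseline, candidate))           # ['c2']
```

The aggregate rate is unchanged, yet case `c2` regressed; a gate that watched only the aggregate would miss it.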

The product-management talent that produces durable competitive advantage is the talent that thinks about the buyer's eval requirements, the long-cycle validation cadence, the contractual structure of the eval-as-product positioning, and the broader product engineering of the eval suite. The current product-management market is not adequately calibrated for this skill, and operators who hire against the current calibration will create gaps that surface downstream in deployment and customer-success outcomes.

The leadership-class talent that produces durable competitive advantage is the talent that recognizes the eval-as-product framing and structures the company's investment, contracts, and competitive positioning against it. The leadership market is also not yet calibrated for this. Companies whose leadership treats AI as a model-vendor relationship rather than as an eval-and-validation engineering investment are structurally exposed to the consequences the Texas AG settlement made public.

Why the framing matters now

The framing matters now because the regulatory environment, the sophistication of buyers' procurement and diligence, and the broader maturity of the category have all shifted to make the eval-as-product framing the dominant operational reality. Vendors who have operated under the eval-as-product framing through the 2018-2024 era are well positioned. Vendors who have operated under the model-as-product framing are running into the structural consequences in 2024-2025 and will continue to face them through 2026-2028 as the regulatory and procurement environments keep shifting.

The Texas AG settlement was the public consequence at one specific vendor. The pattern is broader. Operators reading the pattern carefully will adjust their hiring, their product strategy, and their contractual positioning to align with the eval-as-product framing. Operators who do not will continue to absorb the consequences as they surface in regulatory actions, lost deals, and customer-trust incidents.

The eval is the product. The model is infrastructure. The framing is correct, durable, and increasingly load-bearing in the operational reality of the AI deployment category. Build accordingly.

—TJ