The model card no one reads is now the governance document everyone will require.

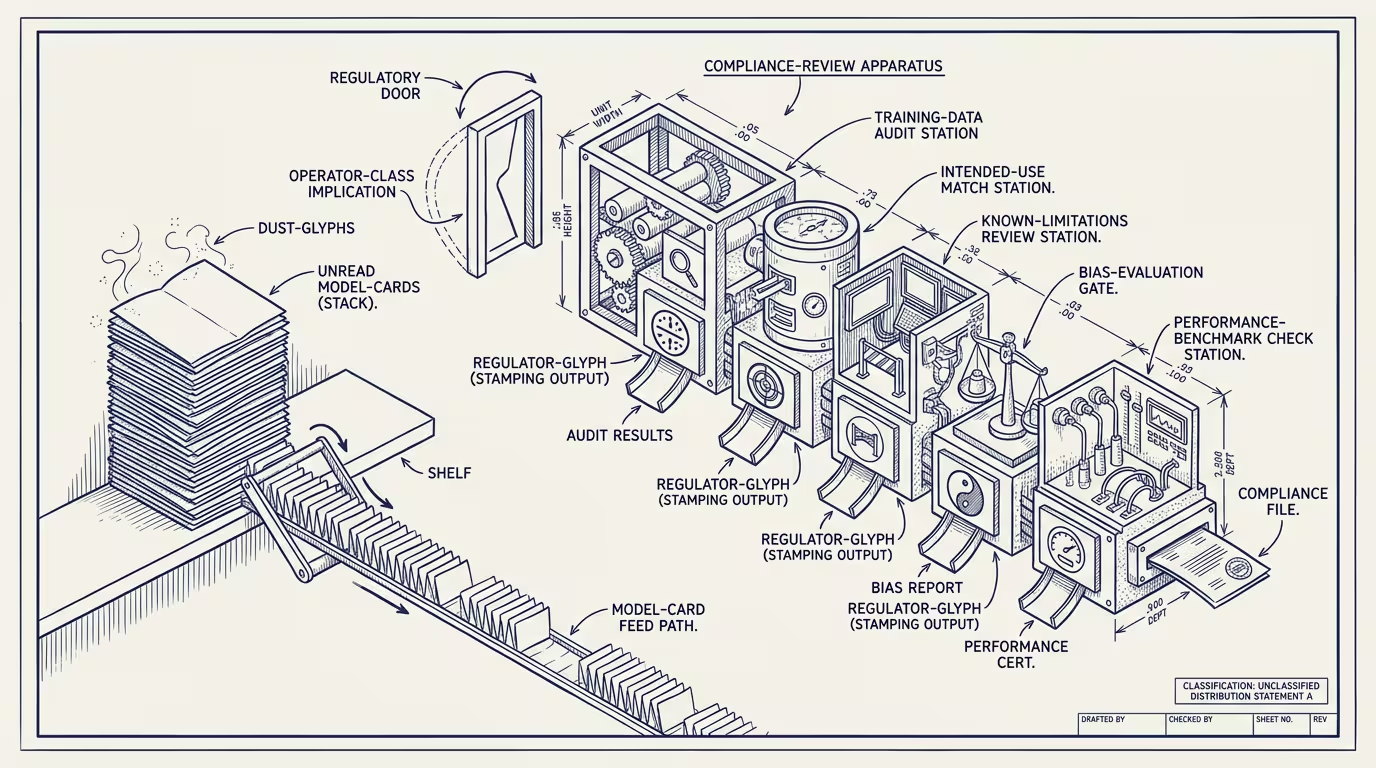

Model cards are, in late 2024, a compliance artifact almost no one reads. The model-card concept landed in 2018 as a research-community proposal: a structured document accompanying every released model, naming the training data, the intended use, the known limitations, the bias evaluations, the performance breakdowns. The labs adopted the format. They publish model cards. The model cards exist.

Almost no one reads them.

The customer who deploys a frontier model in production rarely reads the card past the first page. The procurement officer who signs the vendor contract has not seen the card. The compliance team that owns the AI-policy framework gets the card's existence ticked off in a vendor-due-diligence checklist and does not read the document. The card is, in operating practice, decorative.

That is about to flip.

There are three structural reasons.

One: regulatory frameworks are converging on the model card as a governance primitive. The EU AI Act's high-risk-system disclosure requirements ask for documentation that maps cleanly to the model-card format. The FTC's emerging AI-disclosure guidance is moving in the same direction. The NIST AI Risk Management Framework treats a model-card equivalent as a load-bearing artifact. By 2026 the model card is going to be the document the operator is required to produce on demand to a regulator, an auditor, or a plaintiff's attorney. The artifact that nobody reads in 2024 is going to be the artifact everybody has to produce in 2026.

Two: the litigation environment is creating demand from the legal side. The Air Canada chatbot ruling, the early wave of AI-deployment-misstatement lawsuits, and the malpractice cases brewing around clinical-AI deployments all turn on what the operator knew about the model's limitations and when. The model card is, in court, the documentary evidence of what the operator was told. The plaintiff's attorney reads the card. The defendant's attorney has to defend against the card. The customer who deployed the model on the strength of its claims has to explain why the deployment did not respect the limitations the card named.

Three: the operator's own internal-audit function is starting to require the card. Internal-audit teams at banks, insurers, healthcare systems, and government agencies are building AI-deployment audit checklists in 2024-2025. The checklists ask: did the operator review the model card? Did the operator's deployment respect the use-case scope the card named? Did the operator track changes to the card across vendor model updates? The internal-audit function is, in many cases, ahead of the external regulator on this question, because the audit team's job is to anticipate the regulatory frame before the regulator arrives.

What flips when the model card becomes the governance document everyone reads?

Two things flip operationally.

The first is the labs' model-card discipline. Labs that have been writing model cards as marketing-adjacent disclosure are going to face pressure to write them as legally defensible compliance artifacts. The format will tighten. The verification process around the card's claims will tighten. The card that says "model performs well on medical-question-answering" will have to be backed by the actual evaluation data, the cohort the evaluation was run on, and the conditions under which the claim holds. Labs that resist the discipline will face litigation discovery they cannot survive.

The second is the operator's deployment governance. The operator who deploys a model has to read the card, document the read, and constrain the deployment to what the card supports. _The card becomes the contract._ A deployment that violates the card's stated limitations is a deployment that creates legal exposure the operator cannot offload to the vendor. The operator who deploys carefully wins the next round of regulatory scrutiny. The operator who deploys aggressively pays for the gap.

The structural read in late 2024 is to start treating the model card as the document it is about to become, not the document it currently is. Build the internal process that requires reading the card before deployment. Build the audit trail that documents which version of which card the deployment was based on. Build the governance review that catches deployment-vs-card mismatches before they ship. The cost of doing this in 2024 is small. The cost of not doing it, when the regulatory and litigation environment lands in 2026, is very large.
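What that process might look like in code, as a minimal sketch and not a compliance tool: record a hash of the exact card text that was reviewed, then check a planned deployment against it. Every name here (`CardReview`, `record_review`, `find_mismatches`, the `supported_uses` labels) is hypothetical, and the sketch assumes the operator has already translated the card's intended-use section into a short list of use labels by hand.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class CardReview:
    """One audit-trail entry: who read which version of which card, and when."""
    model_name: str
    card_version: str
    card_sha256: str           # fingerprint of the exact card text that was read
    reviewer: str
    reviewed_at: str           # UTC timestamp, ISO 8601
    supported_uses: frozenset  # use labels the reviewer extracted from the card


def record_review(model_name, card_version, card_text, reviewer, supported_uses):
    """Document the read: hash the card text and stamp the review."""
    digest = hashlib.sha256(card_text.encode("utf-8")).hexdigest()
    return CardReview(
        model_name=model_name,
        card_version=card_version,
        card_sha256=digest,
        reviewer=reviewer,
        reviewed_at=datetime.now(timezone.utc).isoformat(),
        supported_uses=frozenset(supported_uses),
    )


def find_mismatches(review, current_card_text, deployment_uses):
    """Return the problems a governance review should catch before shipping."""
    problems = []
    current = hashlib.sha256(current_card_text.encode("utf-8")).hexdigest()
    if current != review.card_sha256:
        # The vendor updated the card after the review was recorded.
        problems.append("card changed since review; re-read required")
    for use in sorted(set(deployment_uses) - review.supported_uses):
        problems.append(f"use not covered by reviewed card: {use}")
    return problems


if __name__ == "__main__":
    # Illustrative card text and labels, not taken from any real model card.
    card = "Intended use: customer-support drafting. Not evaluated for medical advice."
    review = record_review(
        "example-model", "2024-10", card, "j.doe", {"customer-support drafting"}
    )
    planned = {"customer-support drafting", "clinical triage"}
    for problem in find_mismatches(review, card, planned):
        print(problem)
```

The hash matters more than the version string. Vendors can revise a card without changing its label, and the audit trail has to show which text the reviewer actually read.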

The exposure cuts across sectors, but it is sharpest in healthcare and finance. Healthcare deployments of AI under FDA or CMS scrutiny will be the first place the model card gets read in court. Finance deployments under SEC or CFPB scrutiny will be second. The operators in those categories who are running model-card-blind deployments in 2024 are the operators producing depositions in 2026.

The model card no one reads in late 2024 is the same artifact that regulators and litigators will, in 2025-2026, require operators to know cold. The flip is happening. The trade press will write it up as a series of "regulator demands AI documentation" stories. The advice that holds is to be ready before the requirement lands. Read the card. Document the read. Constrain the deployment. Or pay the lawyer who reads it for you, in court.

—TJ