Tool use is the only benchmark that matters now that Claude 4 can code for hours unsupervised.

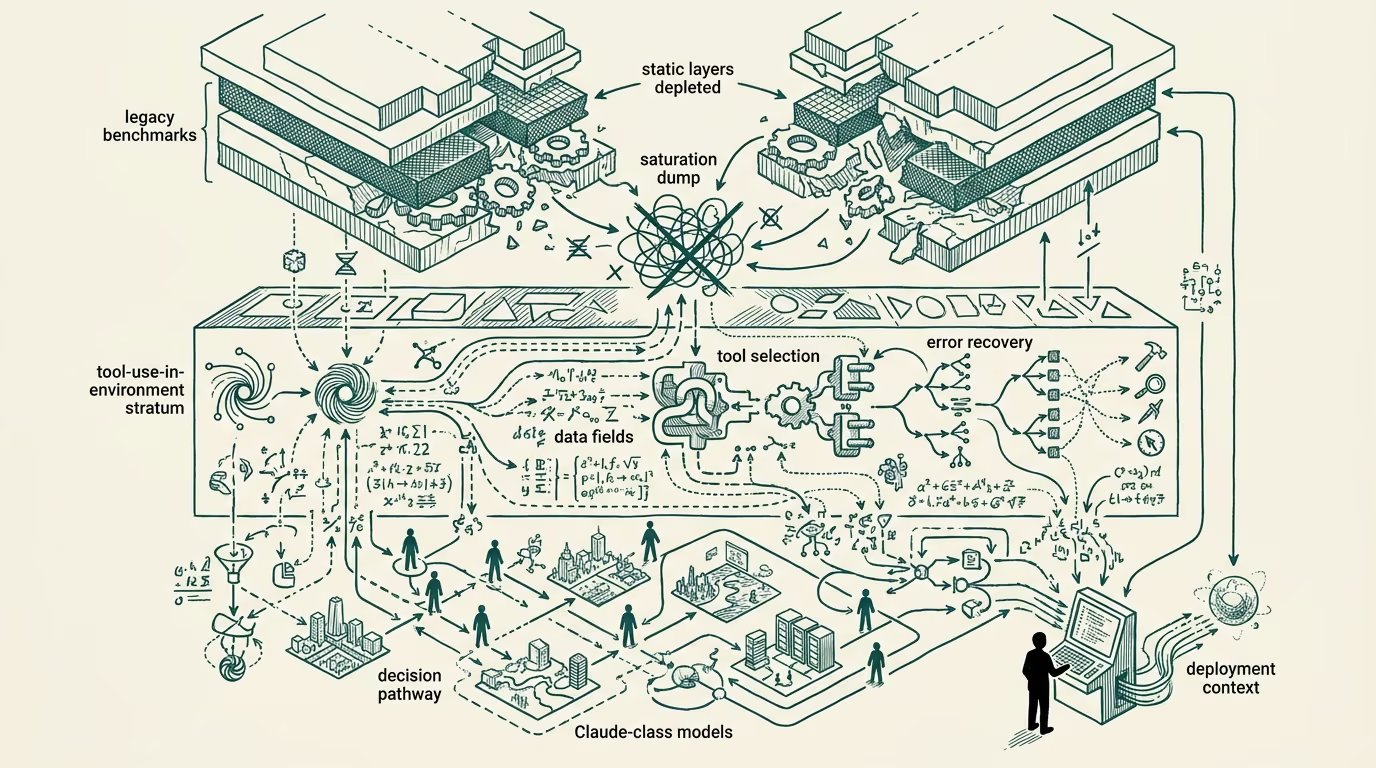

By mid-2025 frontier models had largely saturated the legacy AI benchmark suites. On MMLU, HumanEval, GPQA, BIG-Bench, and the broader category of single-turn knowledge-and-reasoning evaluations, the leading frontier models were scoring in the 90s, and year-old open-weights models were reaching the high 80s on the same benchmarks. The benchmarks that had been the standard evaluation framework from 2022 through 2024 no longer differentiated meaningfully among the models buyers were choosing between.

Once Claude-class models could work against a real environment for hours unsupervised, making their own decisions about tool selection, error recovery, and multi-step workflow execution, the meaningful evaluation question moved to tool use in an environment. By the second half of 2025 the only benchmarks that still separated frontier models were the tool-use evaluations, which measure a model's work in an environment rather than its answers to the static text questions the legacy benchmarks used.

The operator-relevant subset of tool-use evaluation is narrower than the broader "agent leaderboard" framing suggests. Buyers evaluating frontier models for production deployment should attend to that subset, not to the broader leaderboard, because the broader leaderboard generalizes across operating contexts that the buyer's specific deployment does not match. This forecast walks through why the legacy benchmarks saturated, what tool-use-in-environment evaluation actually measures, what the operator-relevant subset is, and why the agent-leaderboard temptation produces wrong conclusions.

Why the legacy benchmarks saturated

The legacy benchmarks were designed to measure single-turn knowledge-and-reasoning capability. The model receives a question and produces an answer, and the answer is graded against a reference. The benchmarks are useful for measuring whether the model has the relevant knowledge and can reason correctly about the question, with no environmental interaction required.

Through 2022-2024 the benchmarks produced meaningful differentiation across model families. By 2025 the frontier models were scoring in the 90s on most of the legacy benchmarks, with the gaps between models compressed to within test-set sampling noise. The benchmarks stopped being useful as model-selection criteria because the differences the buyer cared about were not visible in the score.
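To make "within the noise" concrete, here is a rough sketch that treats each benchmark question as an independent pass/fail trial and computes a binomial standard error for the gap between two scores. The test-set size of 1,000 and all four scores are illustrative assumptions, not figures from any real benchmark, and the function names are made up for this example.

```python
import math

def accuracy_standard_error(accuracy: float, n_questions: int) -> float:
    """Binomial standard error of an accuracy measured on n_questions items."""
    return math.sqrt(accuracy * (1.0 - accuracy) / n_questions)

def gap_within_noise(acc_a: float, acc_b: float, n_questions: int, z: float = 1.96) -> bool:
    """True if the score gap is no larger than roughly 95% sampling noise.

    Simplification: the two scores are treated as independent samples.
    """
    se_gap = math.hypot(
        accuracy_standard_error(acc_a, n_questions),
        accuracy_standard_error(acc_b, n_questions),
    )
    return abs(acc_a - acc_b) <= z * se_gap

# Hypothetical numbers: two saturated scores versus two mid-range scores.
saturated = gap_within_noise(0.95, 0.96, n_questions=1000)
mid_range = gap_within_noise(0.60, 0.70, n_questions=1000)
print(saturated, mid_range)
```

Under these assumptions the one-point gap at the top of the scale cannot be told apart from noise, while the ten-point mid-range gap can. Two models graded on the same questions would normally call for a paired comparison, so this is only a first-order check.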

The saturation is not a problem with the benchmarks; it is a sign that the benchmarks have done their job. The category has produced models with the knowledge-and-reasoning capability the benchmarks were measuring. The next-generation evaluation needs to measure something else.

What tool-use-in-environment evaluation measures

Tool-use-in-environment evaluations measure the model's capability to operate against a realistic environment with the affordances and constraints the production deployment will face. The model is given access to tools (file system, web browsing, code execution, database queries, API calls), an objective, and a budget of time or tool calls. The evaluation measures whether the model achieves the objective, the quality of the result, the efficiency of the tool use, and the failure-and-recovery behavior when tools return errors or unexpected results.
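As a minimal sketch of how those measurements might be recorded, the snippet below summarizes a batch of evaluation episodes into a success rate, the share of the tool-call budget used on successful runs, and an error-recovery rate. The `Episode` fields, the `summarize` function, and the sample numbers are all hypothetical; real suites define their own schemas. Result quality is left out because it needs a task-specific grader.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Episode:
    """One evaluation run (hypothetical schema)."""
    succeeded: bool        # did the model achieve the objective?
    tool_calls: int        # tool calls actually made
    call_budget: int       # tool calls allowed
    tool_errors: int       # tool calls that returned an error
    errors_recovered: int  # errors the model worked around

def summarize(episodes: list[Episode]) -> dict[str, Optional[float]]:
    successes = [e for e in episodes if e.succeeded]
    total_errors = sum(e.tool_errors for e in episodes)
    return {
        "success_rate": len(successes) / len(episodes),
        # Efficiency: mean fraction of the budget spent on successful runs.
        "budget_used": (
            sum(e.tool_calls / e.call_budget for e in successes) / len(successes)
            if successes else None
        ),
        # Recovery: share of tool errors the model recovered from.
        "recovery_rate": (
            sum(e.errors_recovered for e in episodes) / total_errors
            if total_errors else None
        ),
    }

# Illustrative data only.
episodes = [
    Episode(True, 20, 50, 2, 2),
    Episode(False, 50, 50, 4, 1),
    Episode(True, 10, 50, 0, 0),
    Episode(True, 30, 50, 2, 1),
]
summary = summarize(episodes)
print(summary)
```

Keeping the three numbers separate, rather than folding them into one score, preserves the distinction between a model that succeeds cheaply and one that succeeds only by exhausting its budget.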

The framing produces meaningful differentiation across frontier models because the capability surface is much larger than the single-turn-knowledge-and-reasoning surface the legacy benchmarks measured. A frontier model that scores within noise of competitors on MMLU may score substantially differently on extended tool-use evaluation, because the tool-use evaluation measures capabilities (planning, error-recovery, tool-selection-judgment, multi-step-coherence, environmental-adaptation) that the legacy benchmarks did not.

The category includes SWE-bench, the various agent-evaluation suites, OSWorld and similar environment-class benchmarks, and the specialty-domain evaluations (medical-diagnostic-with-tools, financial-analysis-with-tools, legal-research-with-tools). The category is expanding rapidly as operators' need for tool-use evaluation has surfaced.

The operator-relevant subset

An operator evaluating frontier models for a specific production deployment needs to attend to the subset of tool-use evaluation that matches the deployment context. The broader "agent leaderboard" framing produces aggregated scores across heterogeneous evaluation contexts, with the consequence that the leaderboard rankings do not predict the model's performance on the buyer's specific use case.

The operator-relevant subset includes evaluations whose tool-use environment, task structure, and failure-mode distribution match the buyer's deployment. A buyer deploying a code-generation agent should weight SWE-bench-class evaluations heavily and weight non-coding agent evaluations less. A buyer deploying a customer-service agent should weight conversational-multi-turn evaluations heavily and weight code-generation evaluations less. A buyer deploying a clinical-decision-support agent should weight medical-domain tool-use evaluations heavily and weight general-domain evaluations less.

The operator advice is to identify the 2-5 evaluations whose context most closely matches the deployment, evaluate the candidate models against those specifically, and use the broader leaderboard only as background context.
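One rough way to shortlist the closest-matching evaluations is to tag the deployment and each candidate evaluation with the traits that matter (tool environment, task structure) and rank by overlap. The sketch below uses Jaccard similarity for that, which is a stand-in chosen for simplicity rather than an established method for this problem. The evaluation names and tags are hypothetical.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def pick_relevant_evals(
    deployment_tags: set[str],
    eval_tags: dict[str, set[str]],
    k: int = 3,
) -> list[str]:
    """Return the k evaluations whose tags overlap most with the deployment."""
    ranked = sorted(
        eval_tags,
        key=lambda name: jaccard(deployment_tags, eval_tags[name]),
        reverse=True,
    )
    return ranked[:k]

# Hypothetical deployment: a coding agent working across a repository.
deployment = {"code-execution", "file-system", "multi-step", "repo-scale"}

# Hypothetical evaluations and their traits.
candidates = {
    "coding_eval_a": {"code-execution", "file-system", "multi-step", "repo-scale"},
    "coding_eval_b": {"code-execution", "multi-step"},
    "desktop_eval": {"gui", "file-system", "multi-step"},
    "support_eval": {"conversation", "api-calls"},
    "browse_eval": {"web-browsing", "multi-step"},
}

shortlist = pick_relevant_evals(deployment, candidates, k=3)
print(shortlist)
```

Tag overlap says nothing about failure-mode distribution, so a shortlist produced this way is a starting point for manual review, not a substitute for it.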

Why the agent leaderboard temptation produces wrong conclusions

The agent-leaderboard framing aggregates across heterogeneous evaluations, producing a single ranking that the trade press and marketing teams find easy to publicize. The single ranking is operationally misleading because it averages across contexts that the buyer's deployment does not match.

A model ranked first on the broader agent leaderboard may be ranked third on the specific evaluations that match the buyer's use case. A model ranked fifth on the broader leaderboard may be ranked first on those specific evaluations. The buyer who selects on the broader leaderboard ranking is selecting on the wrong signal.

The temptation to use the broader leaderboard is real because the operator-relevant subset is harder to identify and weight than the aggregated ranking. Vendors also have an incentive to promote the broader leaderboard, because it lets them highlight their model's overall position rather than its position on the specific evaluations the buyer's deployment requires.
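The reversal is easy to reproduce with made-up numbers. The sketch below ranks three hypothetical models by their mean score across four hypothetical evaluations, then again across only the two that match a coding deployment. None of the scores come from a real leaderboard.

```python
def rank_models(scores: dict[str, dict[str, float]], evals: list[str]) -> list[str]:
    """Rank models by mean score over the chosen evaluations, best first."""
    def mean_score(model: str) -> float:
        return sum(scores[model][e] for e in evals) / len(evals)
    return sorted(scores, key=mean_score, reverse=True)

# Hypothetical scores (fraction of tasks solved).
scores = {
    "model_a": {"coding": 0.55, "desktop": 0.60, "support": 0.90, "browse": 0.85},
    "model_b": {"coding": 0.70, "desktop": 0.66, "support": 0.60, "browse": 0.62},
    "model_c": {"coding": 0.66, "desktop": 0.62, "support": 0.75, "browse": 0.75},
}

all_evals = ["coding", "desktop", "support", "browse"]
relevant_evals = ["coding", "desktop"]  # assumed match for a coding deployment

leaderboard = rank_models(scores, all_evals)
deployment_view = rank_models(scores, relevant_evals)
print(leaderboard)      # model_a first, model_b last
print(deployment_view)  # model_b first, model_a last
```

Here the aggregate leader owes its position to evaluations the deployment never exercises, which is the pattern described above.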

What this implies

For buyers evaluating frontier models in 2026 and beyond, the practical advice is to invest in identifying the operator-relevant evaluation subset for the buyer's specific deployment, evaluate the candidate models against that subset, and discount the broader agent-leaderboard rankings.

For model vendors, the takeaway is that buyers are increasingly sophisticated about the operator-relevant evaluation question. Vendors that publish performance against the operator-relevant evaluations the buyer's deployment will face should expect stronger sales engagement than vendors that only publish against the broader leaderboard. Vendor positioning should evolve accordingly.

For investors evaluating the broader AI category, the read is that model differentiation is increasingly in the tool-use-in-environment dimension rather than in the legacy-benchmark dimension. Diligence work should weight the tool-use evaluation question heavily.

The legacy benchmarks did their job. The tool-use benchmarks are doing the next job. The operator-relevant subset of tool-use evaluation is the framework the buyer-class should be using. The broader agent-leaderboard temptation produces wrong conclusions and should be resisted. Build the diligence framework against the operator-relevant subset; ignore the broader leaderboard except as background context.

—TJ