Yegge burns $100 an hour in parallel. The unit economics are still the question.

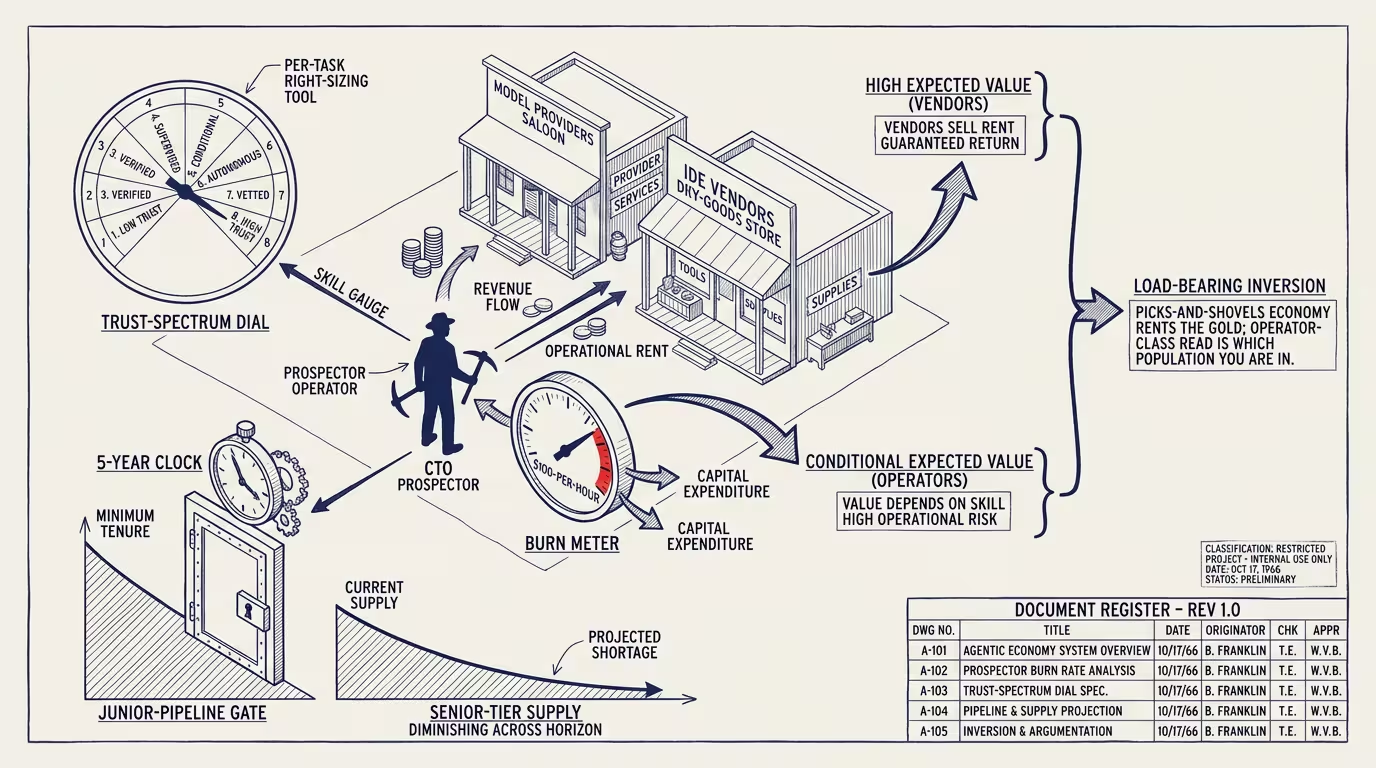

Steve Yegge published two essays in early 2026 that, taken together, are the cleanest operator-class articulation of where agentic coding actually sits in the unit-economics layer. "Welcome to Gas Town" lands the framing: the AI-coding boom is a Gold Rush, the model providers are the saloon and the dry-goods store, and the developers running parallel agents are the prospectors burning capital to extract whatever value the rush is producing. "Wasteland" lands the warning: when the rush ends, or when it just settles into a steady state, the landscape it leaves behind is one where the junior-dev pipeline has been hollowed out and the senior tier is depleting on a five-year clock that nobody in the executive suite has bothered to draw on the whiteboard.

The headline number from the essays is the one the trade press grabbed: roughly $100 per hour of sustained burn rate when running multiple parallel agentic-coding instances against a real codebase. The trade-press read of that number is "agentic coding is too expensive." That reading is wrong by a factor of about 1.5 to 3, and the wrongness is the entire operator-class argument.

A senior engineer in a high-cost-of-living US tech market, fully loaded with benefits, equity, infrastructure, and overhead, costs the employer somewhere between $150 and $300 per hour. The $100/hour Yegge reports is below even the low end of that range. The number to anchor on is not a cost ceiling on agentic coding; it is the cost floor of the human alternative, roughly $150 per hour, and the agentic version is already underneath it. The trade press reads $100/hour as expensive because it has been comparing that figure to the marginal cost of an open-source IDE, which is zero. The right comparison is to the loaded cost of the human the agent is replacing, augmenting, or accelerating. Against that comparison, $100/hour is the bargain layer.
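The "factor of about 1.5 to 3" can be checked with a few lines of arithmetic. This is a minimal sketch using only the dollar figures quoted above; the constant and function names are illustrative, and it compares hourly cost only, on the assumption that an agent-hour and a human-hour are being weighed against each other at all.

```python
# Figures taken from the essay: ~$100/hour agent burn,
# $150-$300/hour fully loaded senior-engineer cost.
AGENT_BURN_PER_HOUR = 100.0
SENIOR_LOADED_LOW = 150.0
SENIOR_LOADED_HIGH = 300.0


def cost_ratio(human_cost_per_hour: float, agent_cost_per_hour: float) -> float:
    """Return how many times more a human hour costs than an agent hour."""
    return human_cost_per_hour / agent_cost_per_hour


low = cost_ratio(SENIOR_LOADED_LOW, AGENT_BURN_PER_HOUR)
high = cost_ratio(SENIOR_LOADED_HIGH, AGENT_BURN_PER_HOUR)
print(f"A senior hour costs {low:.1f}x to {high:.1f}x the agent burn")
```

The ratio says nothing about output per hour, which is why the rest of the argument turns on operating skill rather than on the price tag.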

That math is the floor of the structural read. The ceiling is everything else Yegge writes about, and the everything-else is what the trade press hasn't gotten to yet.

Here is the historical analogy that makes the rest of the operator argument legible.

The 1849 California Gold Rush had two distinct economic populations. The first was the miners. They came west, paid speculative entry costs, dug, and produced value that, in expectation across the population, was barely sufficient to cover the cost of being there. A small percentage of miners struck it rich. Most broke even or worse. The Gold Rush did not, in aggregate, make miners rich.

The second population was the picks-and-shovels economy. Levi Strauss sold dry goods. Wells Fargo banked the gold. Studebaker, before becoming a car company, made wheelbarrows. The towns that supplied the miners (San Francisco, Sacramento, the smaller boom-towns dotting the Sierra foothills) captured durable infrastructure value that survived the rush. The picks-and-shovels operators got rich with high probability. The miners got rich with low probability. Both populations were participating in the same Gold Rush. The expected returns were operating on completely different distributions.

Yegge's "Gas Town" is the same economic shape mapped onto agentic coding in 2026. Anthropic, OpenAI, and Google DeepMind are the model providers; that's the dry-goods store. Cursor, Sourcegraph, and Windsurf form the IDE-class layer; that's the saloon. The operator running parallel agents at $100/hour is the prospector. The picks-and-shovels economy in 2026 is unambiguously where the durable value is accruing. Anthropic's revenue ramp, OpenAI's enterprise-tier expansion, the IDE-vendor valuations through 2025: all of that is the saloon getting rich while the prospectors burn through their capital trying to find the seam.

That is not, by itself, an argument against being the prospector. Some prospectors did get rich. The argument is that the operator class needs to know which population it is in. The CTO authorizing the $100/hour burn is the prospector. The saloon is taking the cut every hour, with very high probability of capturing positive expected value. The prospector is capturing positive expected value only conditional on a set of operating-skill factors that the trade-press version of this story doesn't surface.

What are those factors?

The first one is what Yegge spends the central section of "Wasteland" on, and it is the part the operator class is most likely to mis-execute. Yegge's eight-level developer-trust spectrum runs from level-one (developer reads every line, treats agent as autocomplete) to level-eight (developer specifies intent, agent ships to production with minimal review). That spectrum is not a ladder you climb. The trade-press read is that the operator goal is to migrate the team up the spectrum: get to level-six, capture the productivity yield, justify the burn. That reading is a misreading.

The actual structural reading is that the spectrum is a dial you set per task. A migration script that affects no production traffic and is instantly reversible can run at level-seven. A change to authentication middleware in a system serving regulated data should run at level-two regardless of how much agentic-trust the team has accumulated. Same engineer, same agent, same toolchain. But the trust-level is a property of the task, not a property of the team. Operators trying to migrate the whole team to level-six are over-trusting on the high-stakes work and under-trusting on the low-stakes work. Both errors burn $100/hour without producing the yield that justifies it.
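One way to picture the dial is as a function of the task rather than of the team. The sketch below is an assumption-laden illustration, not anything from Yegge's essays: the `Task` fields and the middle-of-the-range levels are hypothetical, and only the two endpoints (level-seven for the reversible offline migration, level-two for auth middleware on regulated data) come from the examples above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """Hypothetical task descriptor; the fields are illustrative assumptions."""
    name: str
    touches_production: bool
    reversible: bool
    regulated_data: bool


def trust_level(task: Task) -> int:
    """Pick a level on the 1-8 spectrum from properties of the task alone."""
    if task.regulated_data:
        return 2  # essay's example: auth middleware serving regulated data
    if not task.touches_production and task.reversible:
        return 7  # essay's example: offline, instantly reversible migration
    if task.reversible:
        return 5  # hypothetical middle setting, not from the essay
    return 3      # hypothetical: production-facing and hard to undo


migration = Task("backfill script", touches_production=False,
                 reversible=True, regulated_data=False)
auth_change = Task("auth middleware", touches_production=True,
                   reversible=False, regulated_data=True)

print(trust_level(migration), trust_level(auth_change))
```

Note that nothing in `trust_level` takes the engineer or the team as an input; that omission is the point.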

The right-sizing skill is the new senior-engineer skill. It is taxonomy work: looking at a task and deciding which trust level it warrants, then configuring the agent loop for that level. That work is invisible in the press coverage because it is not visible in the IDE; it lives in the operator's head and in the team's review processes. Operators who develop the right-sizing capacity capture the productivity yield. Operators who don't are the ones the trade press will be writing about in 2027 when the agentic-coding deployments fail to deliver the projected gains.

The second factor is the bottleneck migration. For about forty years the bottleneck on producing software was typing speed at the very low end and design speed at the very high end. Through the 2010s the bottleneck moved to coordination: getting the right humans aligned on the right specifications, getting code through code review, managing dependencies across teams. Agentic coding does not solve coordination, but it changes which sub-problems coordinate around what. The new bottleneck (and Yegge calls this out explicitly in both essays) is context curation. The agent will produce useful output only if the context window contains the right pieces of the codebase, the right examples of the team's conventions, the right test fixtures, the right architectural notes. Producing that context is a real engineering skill. It is the new typing speed. Teams that have invested in context-curation infrastructure (repository-class indexing, pattern-class examples, convention documentation the agent can actually read) capture the productivity yield. Teams that haven't are running their parallel agents against context windows that produce subtly-wrong output that costs them downstream review time.

That brings us to the eval gap, which is Yegge's quietest point and his most important one. Most teams running agentic coding in 2026 have no objective measure of the output quality. They have qualitative impressions. They have anecdotes. They have a vague sense that the agent is "working." They do not have evals that load into the unit-economics math. Without evals, the $100/hour is producing artifacts whose quality cannot be measured against the artifacts the team would have produced without agents. The burn is real; the productivity claim is unverified at the team level. Operators who have not invested in eval infrastructure cannot answer the basic operator question: is this paying off?
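What "evals that load into the unit-economics math" might look like, at the smallest possible scale, is a cost figure divided by a quality-gated count. The sketch below assumes a metric of cost per change that passes the team's eval suite; that metric, the `EvalRun` fields, and every number in the usage lines are hypothetical placeholders, not measurements from the essays or from any real team.

```python
from dataclasses import dataclass


@dataclass
class EvalRun:
    """One measured work session; all fields are illustrative assumptions."""
    hours: float
    cost_per_hour: float   # agent burn or loaded human cost
    changes_attempted: int
    changes_passing: int   # changes that passed the team's eval suite


def cost_per_passing_change(run: EvalRun) -> float:
    """Total spend divided by the number of changes that cleared the evals."""
    if run.changes_passing == 0:
        return float("inf")  # money spent, nothing verifiably shipped
    return run.hours * run.cost_per_hour / run.changes_passing


# Placeholder numbers only, chosen to exercise the function.
agent_run = EvalRun(hours=8, cost_per_hour=100.0,
                    changes_attempted=40, changes_passing=20)
print(cost_per_passing_change(agent_run))
```

Without the `changes_passing` column there is no denominator, and the $100/hour stays a cost with no measured return attached.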

The third factor is the one Yegge frames the whole "Wasteland" essay around. The current generation of senior engineers (the ones running the parallel agents, the ones doing the right-sizing work, the ones building the context infrastructure) were trained in a now-defunct apprenticeship system. They were juniors in 2010, mid-levels in 2015, seniors in 2020. The pipeline that produced them was: hire juniors, expose them to senior-led code review, let them grind through the unproductive years where they're net-negative on team output, watch them transition into the productive senior tier on a five-to-eight-year arc. That pipeline is being aggressively cut in 2025-2026. Big Tech is cutting junior hiring because the seniors-with-agents combination is, on a one-quarter-window measurement, producing more output than the seniors-with-juniors combination. The math works on the quarterly-earnings cycle. The math does not work on the five-to-eight-year clock for replacing the senior tier.

In 2031, the firms that cut junior hiring in 2025 will have a senior tier that is one-third smaller than it would have been, and they will not be able to import their way out of the gap because every other firm that cut junior hiring will be in the same position. The senior labor market in 2031 will have a price discontinuity. The firms that kept hiring juniors through the 2025-2026 window, even at apparent productivity cost, will be the ones with senior-tier supply that they actually own. That is the operator-tier arbitrage Yegge is naming. The trade press isn't writing it because the trade press operates on a one-quarter window. The CFOs aren't running the math because the math doesn't show up in 2026's earnings calls.

What follows from all of this for an operator authorizing the $100/hour burn?

The burn is a bargain at the surface number, conditional on three operating capacities the team needs to actually possess. The team has to know how to right-size trust level per task. The team has to have built context-curation infrastructure that lets the agents produce useful output. The team has to have evals that let the operator verify the productivity claim is real for this team on these workflows. Operators with those three capacities are the prospectors who actually find gold. Operators without them are the prospectors burning capital to enrich the saloon.

Two structural recommendations sit on top of that.

The first is to keep hiring juniors through the productivity-argument window, on the explicit theory that the 2031 senior-tier shortage is a real and pricing-able event. The cost of carrying juniors through 2026-2028 is paid in the same currency as agent burn rate. The benefit is a senior tier that exists in 2031 when most of the industry's doesn't. That trade is operator arbitrage with a five-year horizon and a real expected payoff. Most firms will not take it because most firms are running the one-quarter math.

The second is to read the picks-and-shovels economy clearly. The model providers are the dry-goods store. The IDE vendors are the saloon. The infrastructure layer that isn't yet built (the eval-class infrastructure, the context-curation tooling, the trust-level-routing systems) is the picks-and-shovels economy that has not yet emerged. That layer is where the next set of durable operator-tier businesses get built. Operators with the platform-class capacity to build into that layer have a multi-year window to capture infrastructure value before the layer commodifies. By 2028 the layer will exist; the question is who builds it.

Yegge burns $100 an hour in parallel. He captures the productivity yield because he is staffed at the operator level: context curation, eval infrastructure, trust-level right-sizing, and a personal commitment to the long-arc bet on the junior pipeline. Most teams are not staffed at that level and are paying the same hourly rate to enrich the saloon. The unit economics differ by an order of magnitude depending on which population the team is in. The trust level is a variable; the burn rate is a fixed cost; the picks-and-shovels economy is a structural fact; the senior-tier shortage is a five-year clock that started in 2025 and that nobody is winding back.

Read both essays. The $100/hour number is the headline. The structural read is everything underneath it.

—TJ