Even the AGI bulls are quietly long adoption risk.

Dario Amodei's "Machines of Loving Grace" projects a hundred years of medical progress compressed into a decade once powerful AI arrives. It is arguably the most-cited piece of healthcare optimism from a frontier-lab CEO in 2024. The argument runs through neuroscience, oncology, cardiovascular disease, and rare disease. The timeline is bold. The biology is, in the essay's framing, ready.

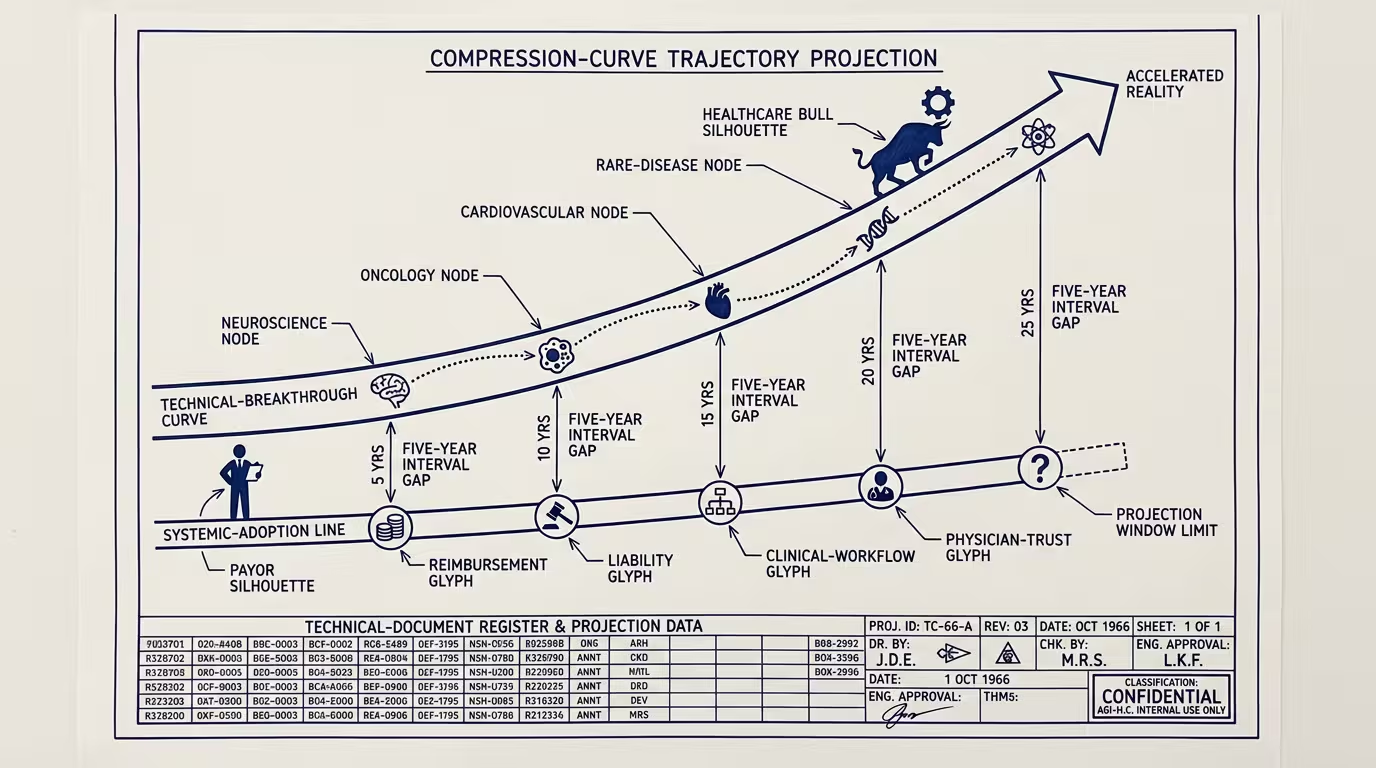

The hidden assumption is the load-bearing one. Amodei assumes AI compresses _both_ technical breakthrough and systemic adoption. The technical side may compress on the model-capability curve. The systemic side does not. _Even the AGI bulls are quietly long adoption risk._

The systemic side has five gates. None of them scales on the model-cost-decline curve, and all of them sit between the discovery and the patient.

Reimbursement is the first. A new therapy, a new diagnostic, or a new clinical-AI-driven intervention has to reach the patient through a payer that pays for it. The payer's coverage decision is downstream of HEDIS measures, of clinical-evidence accumulation, of the regulatory body's review timeline, and of the negotiation cycle between the manufacturer and the payer. None of those compress because Anthropic has a faster model. CMS coverage decisions typically take 24-48 months from FDA approval to consistent payer coverage. Private payers follow CMS on a 6-12 month lag. A therapy that exists in 2026 reaches patients at scale in 2030.

Liability is the second. The clinician who prescribes a new AI-assisted treatment carries the malpractice exposure if the treatment harms the patient. The malpractice insurer prices that exposure based on actuarial data. The actuarial data does not exist for novel AI-assisted treatments until the first wave of cases produces claims, settlements, and litigation. The lag is structurally 5-7 years. The clinician who waits for the liability picture to clarify is not adopting on Amodei's timeline. The clinician who adopts early pays elevated premiums for that uncertainty, and most clinicians won't.

Clinical workflow is the third. A new diagnostic that requires a new test order, a new sample-collection protocol, a new EHR field, or a new physician-review step is a workflow change. Workflow changes in clinical practice take roughly 18-24 months each, because the clinical staff has to be trained, the EHR has to be updated, the billing code has to be in place, and the prior-authorization apparatus has to know about it. AI does not compress that timeline. The hospital that wants to deploy the new AI-assisted workflow is, on the available evidence, looking at 18-24 months of operational change, every time.

Physician trust is the fourth and perhaps the slowest. Physicians adopt new treatments through the same social-proof mechanism that has governed clinical practice for a hundred years: peer recommendation, conference presentation, journal publication, the slow accumulation of the "I've used it on 50 patients and it works" anecdote-corpus. That mechanism does not run on the model-capability curve. The 100-years-in-a-decade compression Amodei is projecting requires every clinician in the U.S. to absorb new clinical knowledge faster than the published evidence base can accumulate. That is not how clinical practice works.

Regulatory pacing is the fifth. The FDA's review pipeline operates at roughly 18-24 months for novel therapies, longer for novel devices, and longer still for novel diagnostic AI. The FDA can move faster, has historically done so under accelerated-pathway frameworks, and is investing in AI-specific review processes. None of that gets the regulatory pipeline to the velocity Amodei's timeline implies. The regulatory infrastructure compresses on a 5-10x curve over 20 years, not on a 100-years-in-a-decade curve over the next ten.

These five together are the adoption layer the essay does not engage with. The technical layer may compress. The adoption layer does not. The compression at the technical layer produces a backlog at the adoption layer, and the backlog is where the operator-class story actually lives.

The cohort cost of the gap is real and is paid by the patient. A novel cancer therapy that exists technically in 2026 but is not reimbursed until 2030 is, for a four-year window, available only to private-pay patients who can afford the manufacturer's launch price. The cohort that cannot afford the launch price waits for the reimbursement decision. Some of that cohort dies waiting. The Amodei timeline does not name this gap. Yet the gap is where the operating economics of healthcare-AI deployment actually have to be modeled.

Underneath the trade-press read, the structural read is sharper. The trade-press read is that healthcare AI is on a steep capability curve and the operator should bet aggressively. The structural read is that the capability curve and the adoption curve are decoupled, and that the adoption curve is the load-bearing variable. The operator who bets aggressively on the capability curve without modeling the adoption curve is the operator whose 2027 deployment is technically capable but lacks regulatory clearance, lacks reimbursement coverage, and is not integrated into clinical workflow.

What the operator should bet on instead is the adoption-acceleration layer. The infrastructure that compresses the reimbursement timeline. The data partnerships that produce the actuarial evidence the malpractice insurers need. The EHR-vendor relationships that cut workflow-change cycle time. The clinician-trust-building mechanisms (registries, post-market surveillance, peer-reviewed real-world-evidence) that accelerate the social-proof curve. The regulatory-affairs investments that get the FDA review timeline down. None of those are AI-specific investments. All of them are the structural conditions on which AI's clinical impact depends. The healthtech operator who invests in adoption-acceleration in 2024-2025 is the operator whose 2027 deployment actually reaches patients. The operator who invests only in capability is the operator whose deployment is impressive in the demo and inert in the field.

Even the AGI bulls are quietly long adoption risk. Amodei is right that the capability curve is steep. He is silent on the part that decides whether the curve translates into outcomes. The operator who doesn't fill in that silence is the operator paying for the gap.

The cohort waiting for the reimbursement decision pays for the gap too: in the years they wait, in the outcomes that arrive late, in the trajectories that look the way they always have because the deployment hasn't reached the field. That is the part the AGI-bull frame does not say out loud. The gap exists. For the next decade, it is where the actual healthcare-outcome impact of AI gets decided.

—TJ