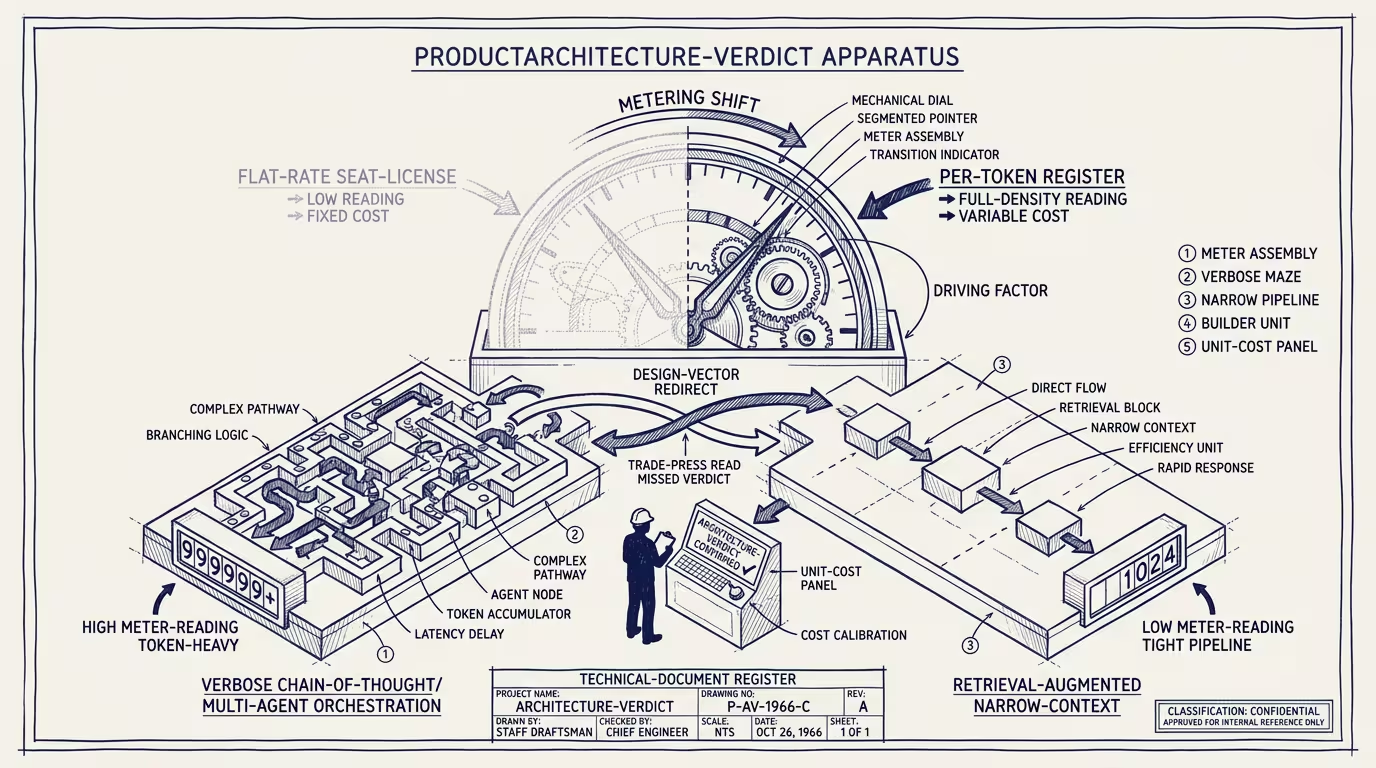

Per-token pricing isn't a billing change. It's a product-architecture verdict.

Anthropic shifted enterprise pricing from flat-rate seat licensing to per-token billing in mid-Q2 2026. The trade press covered it as a billing-model adjustment. The structural read is sharper.

What does the new regime reward, and what does it penalize? The reward side captures applications that minimize tokens per outcome — retrieval-augmented architectures that hit the LLM with focused context, narrow-context single-pass approaches that produce direct answers, curated domain knowledge bases that pre-process documents before the LLM ever sees them. Operators on this side see per-outcome cost compress 10-50x relative to legacy approaches.
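To make "tokens per outcome" concrete, here is a minimal sketch of the arithmetic. The prices and token counts are invented for illustration and are not any vendor's actual rates; `TokenPrice` and `cost_per_outcome` are hypothetical names, not part of any SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Hypothetical per-million-token prices in USD (assumed, not real rates)."""
    input_per_million: float
    output_per_million: float


def cost_per_outcome(price: TokenPrice, input_tokens: int,
                     output_tokens: int, calls: int = 1) -> float:
    """Cost in USD of producing one outcome that takes `calls` LLM calls."""
    per_call = (input_tokens * price.input_per_million
                + output_tokens * price.output_per_million) / 1_000_000
    return per_call * calls


# Assumed prices: $3 per million input tokens, $15 per million output tokens.
price = TokenPrice(input_per_million=3.0, output_per_million=15.0)

# Same answer length, very different context size (both counts are assumptions).
raw_document = cost_per_outcome(price, input_tokens=100_000, output_tokens=500)
focused_context = cost_per_outcome(price, input_tokens=4_000, output_tokens=500)

ratio = raw_document / focused_context
print(f"raw: ${raw_document:.4f}  focused: ${focused_context:.4f}  ratio: {ratio:.1f}x")
```

Under these assumed numbers the raw-document call costs about $0.31 per outcome and the focused call about $0.02, a gap of roughly 16x. The ratio depends mostly on how much context can be trimmed, since the output side is identical in both cases.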

The penalty side captures three architectural patterns.

Verbose chain-of-thought generates explicit reasoning traces (the chain-of-thought prompting popularized in 2022-2023) that consume substantially more tokens than direct-answer applications. The reasoning quality often improves; the per-outcome cost rises 3-10x. Per-token pricing makes that increase visible on the bill, and operators have to decide whether the quality improvement justifies the cost premium. For most production deployments, the answer is no: operators will replace verbose chain-of-thought with retrieval-augmented architectures that hit comparable outcomes with fewer tokens.

Multi-agent orchestration decomposes tasks into multiple agent steps, each with its own context window and its own token consumption. Under per-token pricing, the orchestration tax is operationally visible, and operators will collapse multi-agent architectures into narrower single-pass approaches.

General-purpose LLM reasoning at scale sends raw documents to the LLM and asks it to reason over the full content, consuming tokens proportional to document length. Applications that pre-process documents into curated knowledge bases hit the LLM with focused context that's 10-100x smaller and capture the cost advantage.
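A rough sketch of why the first two patterns get expensive, counting tokens rather than dollars. Every token count below is an assumption chosen for illustration, and the multi-agent model (each step re-reads the prior steps' outputs) is one simple way such a pipeline might behave, not a description of any particular framework.

```python
def total_tokens(calls):
    """Sum input and output tokens over a list of (input_tokens, output_tokens) calls."""
    return sum(inp + out for inp, out in calls)


PROMPT = 2_000   # assumed prompt plus retrieved context
ANSWER = 300     # assumed length of a direct answer

# Direct answer: one call, short output.
direct = [(PROMPT, ANSWER)]

# Verbose chain-of-thought: same prompt, but the output carries
# an assumed 6,000-token reasoning trace on top of the answer.
chain_of_thought = [(PROMPT, ANSWER + 6_000)]

# Multi-agent orchestration: five steps of 400 output tokens each,
# where step k re-reads the prompt plus the k earlier outputs.
STEP_OUTPUT = 400
multi_agent = [(PROMPT + STEP_OUTPUT * k, STEP_OUTPUT) for k in range(5)]

baseline = total_tokens(direct)
for name, calls in [("direct", direct),
                    ("chain-of-thought", chain_of_thought),
                    ("multi-agent", multi_agent)]:
    tokens = total_tokens(calls)
    print(f"{name:>16}: {tokens:>6} tokens ({tokens / baseline:.1f}x direct)")
```

With these assumed numbers, the chain-of-thought variant uses 8,300 tokens and the five-step pipeline 16,000, against 2,300 for the direct answer. Because output tokens are typically priced higher than input tokens, the dollar gap for chain-of-thought would be wider than the raw token gap suggests.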

What sorts the operator-class winners and losers under the new regime? Domain data. Companies with curated, well-structured domain data (healthcare-AI with EHR-mapped clinical data, legal-AI with case-law knowledge bases, finance-AI with structured market data) can deploy retrieval-augmented architectures whose per-outcome token consumption runs 10-50x lower than general-purpose alternatives. The cost advantage compounds across query volume. Operators with domain-data-as-moat see their unit economics improve under per-token pricing; operators competing on general-purpose capability see their unit economics compress.

What flows from the architectural verdict at the category level? The shift accelerates the move toward integration-layer businesses. The product-architecture changes that per-token pricing rewards (RAG, narrow context, curated knowledge bases) are integration-layer work. Operators capable of executing that architecture capture a margin advantage; operators dependent on general-purpose LLM-class reasoning absorb the per-token tax. The category-leader integration businesses (the Salesforce-class enterprise integrators, the vertical-specific platforms with curated domain data) capture the operator-tier advantage that the pricing regime makes structural.

Will the verdict compound? Likely yes, within 12-18 months. OpenAI, Google, and Meta will likely converge on per-token-class pricing models for enterprise tiers as the precedent stabilizes. The architectural pressure then becomes industry-wide. Operators who reweight architecture in 2026 are calibrated to the multi-vendor per-token-pricing future. Operators who delay the architectural pivot are pricing against a legacy flat-rate assumption that is structurally degrading.

The same shape recurs whenever a pricing-regime change drives product-architecture change. Capability changes shift what's possible; pricing changes shift what's profitable. Operators tracking AI strategic direction should read pricing-regime announcements as architectural-direction signals, not as billing-mechanics noise.

Per-token pricing isn't a billing change. It's a product-architecture verdict. The verdict rewards retrieval-augmented, narrow-context, curated-knowledge-base architectures. The verdict penalizes verbose chain-of-thought, multi-agent orchestration, and general-purpose-LLM-reasoning-at-scale architectures. Operators reading the verdict correctly are building toward the rewarded patterns. Operators reading it as a billing change are absorbing the architectural tax that the regime makes operationally visible.

—TJ