Pick a substrate. The decade's moat is downstream.

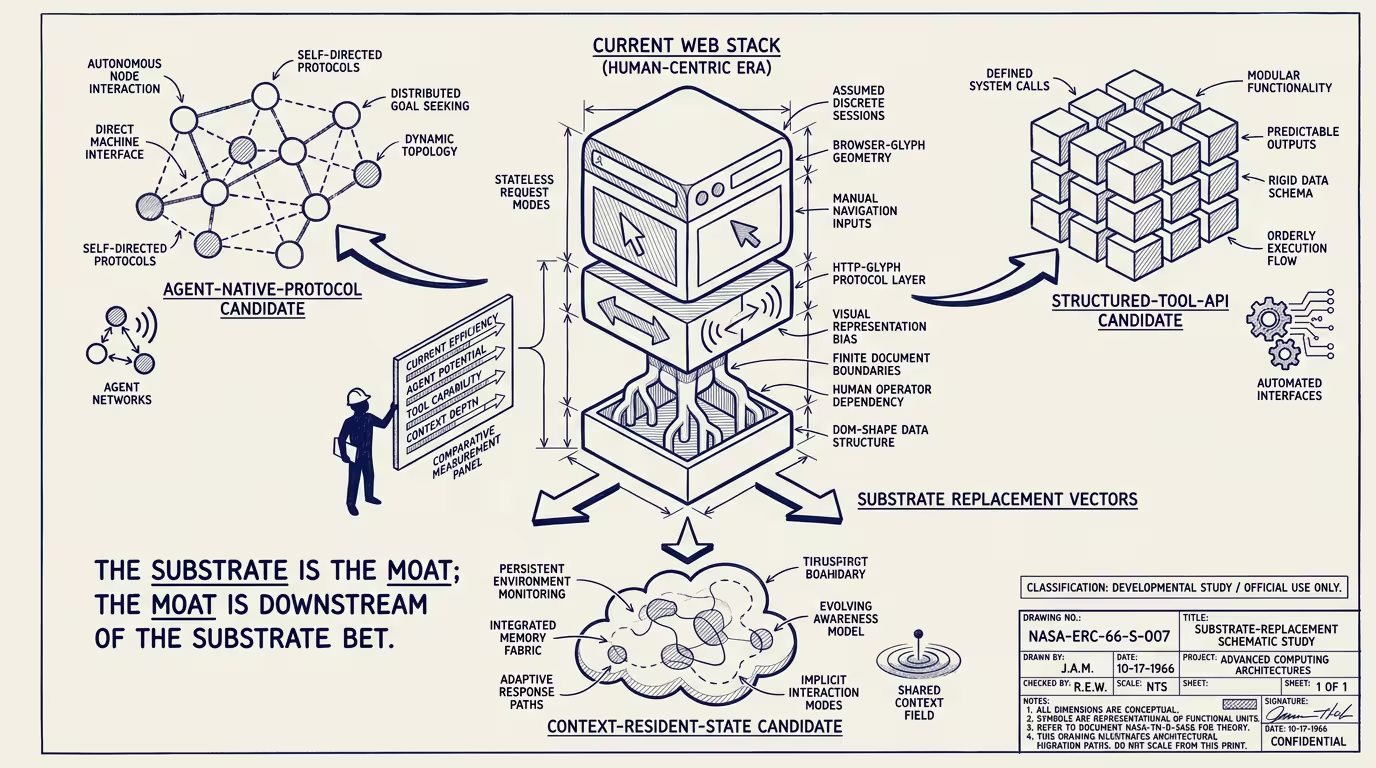

Karpathy's Software 3.0 frame, articulated in his June 2025 YC AI Startup School keynote and refined through subsequent essays, argues that the modern web stack was built for human developers, not for AI consumers, and is therefore the wrong substrate for the next decade of AI-native applications. The framing implies that whichever substrate emerges to replace HTTP-class human-developer-oriented infrastructure captures decade-class moat returns at the operator-class layer.

This is a futurist forecast piece. The forecast is conditional on the substrate-replacement dynamic playing out as Karpathy's framing implies, on no single existing protocol class capturing the substrate role through extension rather than replacement, and on the frontier-lab class supporting the new substrate rather than building closed-substrate alternatives. Each conditional is contestable.

The operator-grade signal that distinguishes a substrate bet from a layer-on-top bet is, in operating terms, the data-and-protocol ownership question. _A substrate bet owns the protocol or the data graph. A layer-on-top bet operates on someone else's protocol or data graph._ That distinction is the load-bearing structural read for everything that follows. It decides whether the operator captures substrate-class moat returns or layer-class commoditized returns. It decides whether the capital deployed compounds across capability cycles or runs to zero on the next protocol shift. It decides which game the company is playing.

Hold that distinction in view. Three candidate substrates have credible claims to capturing the decade's moat downstream, each measured against the ownership test.

Agent-runtime-protocol substrates are positioned to handle the AI-to-AI interaction layer that the HTTP stack does not handle natively. The candidates are MCP (Model Context Protocol, released by Anthropic in November 2024 and adopted across the frontier-lab class through 2025-2026), the agent-to-agent communication protocols emerging from the A2A working groups, and the related agent-runtime infrastructure. Operators building on these substrates capture the substrate moat if the substrate becomes load-bearing for the next decade's AI applications. The bet is that the substrate becomes load-bearing rather than getting absorbed into a closed-frontier-lab alternative.
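For readers who want the wire-level picture: MCP messages are JSON-RPC 2.0 objects, and a tool invocation uses the `tools/call` method. The following is a minimal sketch of that request shape only, not a client implementation. The helper `build_tool_call`, the tool name `search_listings`, and its arguments are hypothetical, chosen for illustration.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request in the shape MCP uses for tool calls.

    Hypothetical helper: it builds the message only and does no transport,
    session initialization, or capability negotiation.
    """
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# "search_listings" and its arguments are made up for this example.
wire = build_tool_call(1, "search_listings", {"city": "Lisbon"})
decoded = json.loads(wire)
print(decoded["method"], decoded["params"]["name"])
```

The point for the ownership test is that whoever defines this message shape, and governs how it evolves, holds the protocol. Everyone calling `tools/call` is building against it.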

Structured-output and provenance substrates cover emerging conventions for cryptographically signed AI outputs, content-provenance metadata (C2PA-class), and structured-output APIs that are AI-native rather than human-developer-native. Together they form the trust-layer infrastructure that the post-2024 trust-stack failure (NYT blind test, AI-content procurement contamination) creates demand for. Operators building substrate-class positions in this layer capture moat-class returns if the trust-layer demand materializes at scale and the conventions stabilize.
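To make "signed AI output" concrete, here is a minimal sketch of a tamper-evident envelope around a structured output. It is an illustration under loud assumptions: it uses an HMAC with a shared secret from the Python standard library, which is a stand-in for the certificate-based public-key signatures that C2PA-class provenance relies on. The key, the field names, and the functions `sign_output` and `verify_output` are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. A real provenance scheme would use public-key
# signatures so that verifiers never hold the signing secret.
SECRET_KEY = b"demo-key-not-for-production"


def _canonical(payload: dict) -> bytes:
    """Serialize with sorted keys so the same payload always hashes the same."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_output(payload: dict, key: bytes = SECRET_KEY) -> dict:
    """Wrap a structured output in an envelope carrying an HMAC-SHA256 tag."""
    tag = hmac.new(key, _canonical(payload), hashlib.sha256).hexdigest()
    return {"payload": payload, "mac": tag}


def verify_output(envelope: dict, key: bytes = SECRET_KEY) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = hmac.new(key, _canonical(envelope["payload"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, envelope["mac"])


envelope = sign_output({"model": "example-model", "text": "Quarterly summary."})
tampered = {"payload": {**envelope["payload"], "text": "Edited."}, "mac": envelope["mac"]}
print(verify_output(envelope), verify_output(tampered))
```

The mechanics are simple. The contested part is who defines the envelope format and whose keys verifiers agree to trust, which is the substrate position in this layer.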

Data-graph-substrate platforms are longitudinal-data-graph operators working category by category: healthcare-AI longitudinal patient data, finance-AI customer-transaction graphs, and education-AI learner-progress graphs, each running specialized AI on top of proprietary domain data. They are not a single shared infrastructure but a category-by-category replication of the Airbnb specialized-AI playbook. Operators with category-specific data graphs at scale capture substrate-class moat in their categories.

The ownership test cuts cleanly through all three. The operator who owns the protocol or the data graph is making a substrate bet; the operator who builds against someone else's protocol or graph is making a layer bet. The first pays out on a decade-class horizon and requires capital deployment that compounds across multiple capability cycles. The second pays out on a 2-4 year horizon and is more capital-efficient per dollar of return. Operators have to pick which game they are playing. Most are playing layer games while benchmarking themselves against substrate-bet operators, which produces investment theses that are incoherent because the time horizons do not match.
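The ownership test is simple enough to write down as a rule. The sketch below is one hedged way to encode it: the `Position` type, its field names, and the three example positions are hypothetical, and the rule is exactly the one stated above (own the protocol or the data graph, and it is a substrate bet; otherwise it is a layer bet).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    """A hypothetical description of an operator's strategic position."""
    name: str
    owns_protocol: bool
    owns_data_graph: bool


def classify(position: Position) -> str:
    """Apply the ownership test: owning either asset makes it a substrate bet."""
    if position.owns_protocol or position.owns_data_graph:
        return "substrate"
    return "layer"


# Illustrative positions only; none refers to a real company.
examples = [
    Position("protocol steward", owns_protocol=True, owns_data_graph=False),
    Position("vertical data-graph operator", owns_protocol=False, owns_data_graph=True),
    Position("app on a third-party protocol", owns_protocol=False, owns_data_graph=False),
]
labels = {p.name: classify(p) for p in examples}
print(labels)
```

The value of writing it this way is that it forces the two inputs to be answered explicitly for a given company before any return profile is modeled.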

The forecast itself runs on two contingencies that the ownership test does not resolve. The first is whether the existing HTTP stack absorbs AI-native extensions adequately, through evolved versions of REST APIs, JSON-RPC extensions, and OAuth-class extensions for agent identity. If it does, substrate bets underperform layer bets across the decade. The second is whether OpenAI, Anthropic, Google, and Meta each build closed-substrate alternatives, splitting the substrate-emergence scenario across multiple closed substrates rather than converging on a single open one. The split outcome confines substrate-class returns to whichever operators get inside the dominant closed substrate, with everyone else absorbing layer-class returns. Both contingencies are partially resolvable through 2026-2027 as MCP adoption rates and frontier-lab protocol-class moves become measurable. Operators tracking the contingencies closely will be better calibrated on which game to play.

The point that crosses pillars is that the substrate-vs-layer question recurs in every operator-level capital-allocation decision in AI-adjacent categories through 2026-2030. The question is binary at the strategy level and contingent at the forecast level. Operators with substrate positions absorb the contingency-resolution outcome at high amplitude. Operators with layer positions absorb it at low amplitude. The decision is, in operating terms, a risk-tolerance question rather than a forecasting-accuracy question.

The read that survives has three parts. The substrate-emergence question is one of the load-bearing decade-class forecast questions for AI-adjacent operators. The three substrate candidates named here have credible claims under specific contingency configurations. And the operator discipline is to make the substrate-vs-layer choice explicit rather than to default to layer bets while modeling substrate-class returns in the projection. Operators making implicit bets across the spectrum are absorbing forecast uncertainty without making the commitments that would compensate for it.

Pick a substrate. The decade's moat is downstream of the substrate that emerges. The forecast cuts both ways on the substrate-emergence question. Operators with explicit positions on either side are operating-coherent. Operators without explicit positions are absorbing the contingency without making the bet that the contingency rewards.

—TJ